Fuck this shit, why does every fucking thing need an LLM?

Am I out of touch?

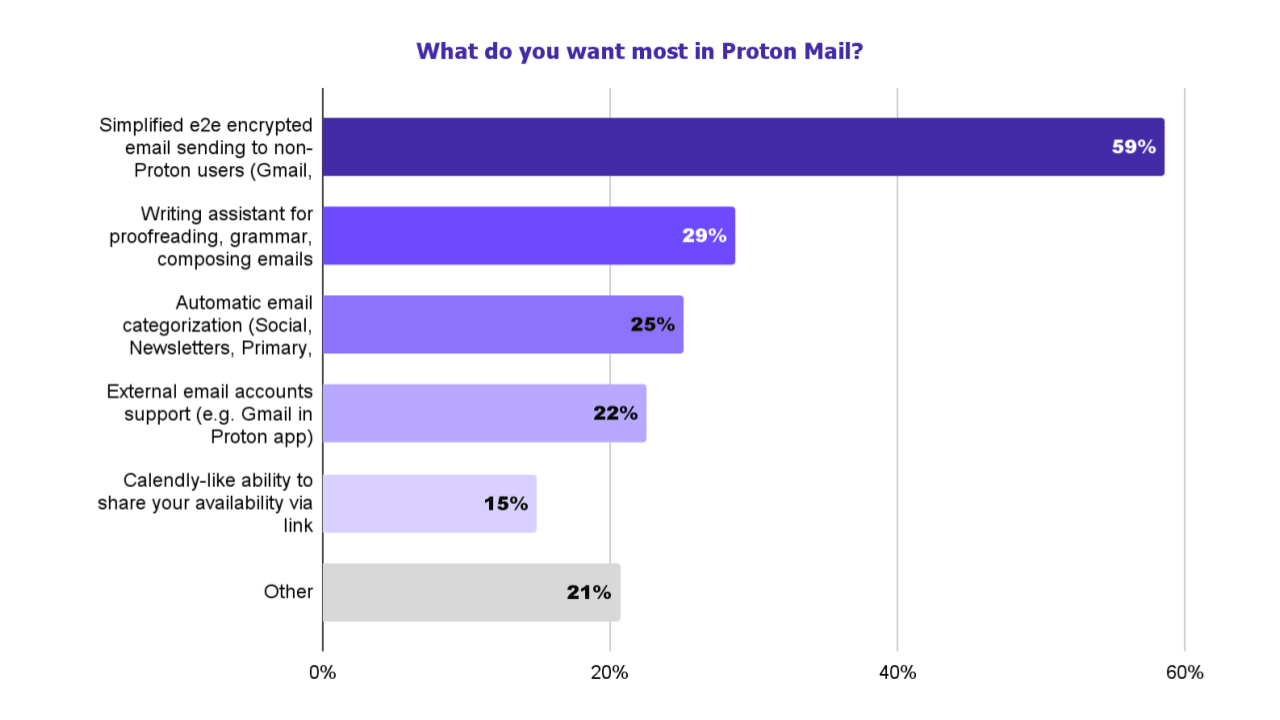

a writing assistant was one of the most requested features in our recent survey

Apparently, I am. People actually want this

For Proton Mail, 59% of respondents want an easier way to send end-to-end encrypted emails to non-Proton users, while 29% want a writing assistant for proofreading, grammar, and composing emails.

Nothing I hate more than not giving a link to the repo

Scribe relies on open source code and models, and is itself open source and therefore available for independent security and privacy audits

Not on their support page specifically for it either

Had to go to Reddit and look at their comments to find out they’re using Mistral

https://reddit.com/comments/1e68sof/comment/ldsbs24

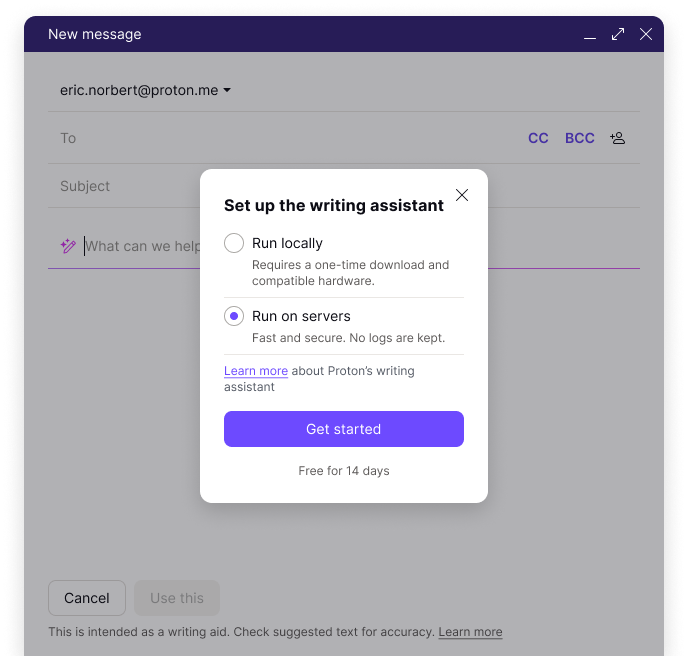

We built Scribe in r/ProtonMail using the open-source model Mistral AI to empower anyone in need of email productivity to use a privacy-respecting alternative to r/ChatGPT or r/GeminiAI that:

❌ doesn’t log or save prompts

⛔️ doesn’t use your data for training

🔎 open-source code that anyone can inspect

🖥️ can be run locally, so your data never leaves your device

See the official announcement here: https://proton.me/blog/proton-scribe-writing-assistant

https://huggingface.co/mistralai/Mistral-7B-v0.1/discussions/8

Hello, thanks for your interest and kind words! Unfortunately we’re unable to share details about the training and the datasets (extracted from the open Web) due to the highly competitive nature of the field. We appreciate your understanding!

Apparently, I am. People actually want this

Thank you for recognizing this. It gets quite frustrating in threads like these about new AI tools being deployed when people declare “nobody wants this!” And I try to explain that there are actually people that do want it. I find many AI tools to be quite handy.

I tend to get vigorously downvoted at that point, as if that would make the demand “go away” somehow. But sticking heads in sand doesn’t accomplish anything except to make people increasingly out of touch.

I think that the point is it’s entirely pointless building something like this into the email system. It should be a separate system that you can choose to use if you want it. Building it in just opens questions about exactly what they’re doing with your data, despite their assurances.

For me the issue here is, why put so much time and energy into basically rebranding an LLM? I’ve seen LLMs running on RPi and Android phones. Why not write a blog post showing how to run LLMs locally with existing tools, for the best privacy, and put more focus on their existing services instead? It just seems like they’re jumping on the AI bandwagon and charging a premium for an already freely available LLM.

I see some benefits of AI, like quality TTS when using OSM and STT when transcribing/translating audio, but other things like Google’s AI answers and Microsoft’s Copilot leave me scratching my head wondering why consumers would want this.

Probably because at the end of the day:

- Most people don’t have the tools or desire to figure out how to run an LLM locally.

- What if I run a local LLM on my PC and I leave my home? Do I now need to learn how to deploy a VPN at home so I always have access? I could do this, but I don’t want to. Oh, you know a model that runs on Android? What if I have an iPhone?

- Proton is a for-profit business that surveyed their customers and got feedback that customers wanted a writing assistant. This one seems the most important.

This is one of those situations. People would eat sweet food if they didn’t know it’s bad for them. In fact they still eat shitloads while they do know it’s bad. Same goes for opiates, and there’s a reason why they are regulated in most of the world (the USA, of course, is fucked up with this). So yes, let’s boil the fucking oceans so dumb fucks don’t have to learn how to write a fucking email. Let’s say people have to learn what kinds of shit are included with “AI”, they have to learn how much it actually costs, what all of the NFT cunts are now doing, and then say if they want something or not. I mean, this is all just fucking gaslighting by now, right? I don’t care what a fucking ignorant shit thinks about mostly anything, but this is a shitty company telling us ‘you want it so hard baby’ so it can pump that AI investor hose SO HARD.

Seconded

The thing that pisses me off the most is that they are disingenuous almost to the point of lying in interpreting that survey’s results. They say that 75% of users are interested in GenAI, when actually what they asked is whether people have used any GenAI at all in the recent past. And that still doesn’t mean they want GenAI in Proton. That’s a pretty significant sleight of hand. The more relevant question would have been the first one on what service people want the most. In that case only 29% asked for a writing assistant, which is still not the same thing as a full LLM. The most likely answer to “how many Proton customers want an LLM in Proton Mail” seems to be “few”.

I think the philosophical concept of Open Source can’t really work in ML models unless the training data is open as well. As it stands, these “open source” models are still very much a black box. Nobody was really questioning the implementation of the GPT.

Yeah this would be like Google saying Google Search was “open source” because map-reduce was open, or something.

At least this one is open-source and quite privacy respecting

Yeah, and if that’s the case, it seems like people just hate AI for the sake of it now.

LLMs are actually good at some things. Just not everything.

LLMs are actually good at some things.

Just look at the most recent ecological reports about it and combine them with the AI industry growth plans. You’ll get an interesting perspective.

A lot of work has been going into making AIs more energy efficient, both in training and in inference stages. Electricity costs money, so obviously everyone’s interested in more efficient AIs. That makes them more profitable.

Still, you can’t improve it that much. It’s like blockchain. Computers always consume a lot of power, no matter how efficient they are.

Funny you should mention blockchains. Ethereum, the second-largest blockchain after Bitcoin, switched from proof-of-work to a proof-of-stake validation system two and a half years ago. That cut its energy use by 99.95%. The “blockchains are inherently a huge waste of energy” narrative is just firmly lodged in the popular view of them now, though, despite it being long proven false.

But that’s really good! And also means that cloud based AI is even worse than blockchain in terms of environmental impact.

It means that even if AI is having more environmental impact right now, there’s no reason to say “you can’t improve it that much.” Maybe you can improve it. As I said previously, a lot of research is being done on exactly that - methods to train and run AIs much more cheaply than it has so far. I see developments along those lines being discussed all the time in AI forums such as /r/localllama.

Much like with blockchains, though, it’s really popular to hate AI and “they waste enormous amounts of electricity” is an easy way to justify that. So news of such developments doesn’t spread easily.

You can improve it hugely. These things are very young.

There was a paper recently about removing the need for matrix multiplication from them, which is a hugely expensive operation.

Dedicated hardware is also at a very early stage.

That’s simply not true; there are ways to drastically reduce energy usage while increasing efficiency by offloading the work. One company, Mythic AI, has worked on an analog processor that sifts through the model. On GPUs this is the power-hungry process; for example, a PC with an NVIDIA RTX 3080 will typically run at about 350 W under load.

Their claim now is that these analog chips use 1/100th of the energy GPUs need. There’s a video from Veritasium that goes over the details. It’s genuinely effective, and that was a few years ago now, before whatever progress they’ve made with their recent funding. It looks like they actually have products available for inquiry now too.

Doesn’t seem to be at the consumer level yet unless you want to use servers for AI vs. your home computer, but it’s progress. Here’s the thing: I’m not particularly for our current implementation of AI, but I don’t think we should be entirely against all of it either. There are clearly plenty of benefits that people see from them, so giving companies like Google every possible option to sharply cut their energy consumption seems like the reasonable path forward.

The independent drawbacks to LLMs and generative AI don’t mean the technology will stop getting used. It isn’t going anywhere (as in, people will use it), so making it more efficient is the obvious way to mitigate further waste. You can advocate for the prohibition of AI, but it’s honestly more reckless than advocating for businesses’ AI usage to meet a specific energy goal. Forcing these companies to retrofit their servers to run at something ridiculous like 30 W per rack is beneficial for them and for us, as they won’t pay as much for energy and we will all waste less of it.

Wishful thinking, of course, but my point is that energy-efficient AI, fortunately or unfortunately, exists and will continue to. We can already run “AI” on a Raspberry Pi 4, which draws what, 9 watts? This technology will get more developed every year, and while I’d be extremely surprised to see a Pi 4 on its own running a subjectively useful LLM, I can imagine a setup that uses a Pi and some offloading tech to achieve reasonable results.

I’m personally pretty fine with regular people with computers wanting to use AI in whatever way suits them, as long as they aren’t trying to sell the results. While the energy consumption isn’t ideal, it’s a drop in the bucket compared to what these companies’ servers use. We should definitely make every effort possible towards increasing the efficiency of this tech, if only because it seems insane to me to pretend that AI will just disappear, or to let this huge energy suck exist while we hope it begins to fade.

TL;DR: offload GPU work to analog chips, and force companies to be more efficient, simply because hoping AI is going to disappear is reckless.

They’re really good at burning huge amounts of electricity.

I love how their blog posts say so much and so little at the same time, almost like they’ve been generated by an LLM lmfao. I read the blog post and still couldn’t find out what data their model is trained on.

deleted by creator

Do we really need to grow our energy consumption as a society by such a disproportionate amount?

This can run locally on your device, which means it probably doesn’t consume that much energy.

It did when it was trained.

But that was already done. They are using Mistral, which was already trained. Proton didn’t train a new AI for this.

The whole premise of this discussion was about technological progress and growth going by your initial comment. That means refining existing models and training new ones, which is going to cost a lot of energy. The way this industry is going, even privacy conscious usage of open source models will contribute to the insane energy usage by creating demand and popularizing the technology.

What benefit is there to “growth” for its own sake?

In 10 years, 90% of the population that has access to AI will be reduced to a flock without the ability to write a single birthday card.

Were you also complaining about calculators?

Kids these days don’t learn cursive writing, it’s destroying their literacy!

I complain about human laziness.

I mean, you can’t really change that though. Humans are very lazy.

I know that at least with art, AI is starting to eat itself with the massive output of content. AI is getting trained on more and more AI content, and according to what I read, at least, it’s starting to affect new outputs.

Assuming that’s true, it at least makes techie sense to me lol. I expect the same would happen to text-based AI as more and more of the internet becomes exclusively AI-generated.

The term “model collapse” gets brought up frequently to describe this, but it’s commonly very misunderstood. There actually isn’t a fundamental problem with training an AI on data that includes other AI outputs, as long as the training data is well curated to maintain its quality. That needs to be done with non-AI-generated training data already anyway so it’s not really extra effort. The research paper that popularized the term “model collapse” used an unrealistically simplistic approach, it just recycled all of an AI’s output into the training set for subsequent generations of AI without any quality control or additional training data mixed in.
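A toy illustration of the point above, under a drastic simplification: the "model" is just a one-dimensional Gaussian fitted to its training set, and each generation is trained on samples from the previous one. This is not the setup of the paper mentioned, and it only models the "additional training data mixed in" part, not quality-based curation. The names `fit_gaussian` and `run_generations` are made up for this sketch.

```python
import math
import random


def fit_gaussian(data):
    """Maximum-likelihood fit of a 1-D Gaussian: returns (mean, std)."""
    mu = sum(data) / len(data)
    var = sum((x - mu) ** 2 for x in data) / len(data)
    return mu, math.sqrt(var)


def run_generations(generations, n, fresh_fraction, seed=0):
    """Train successive toy models, each on the previous model's output.

    fresh_fraction is the share of every training set drawn from the real
    distribution N(0, 1) instead of from the previous generation's model.
    Returns the variance of the final fitted model.
    """
    rng = random.Random(seed)
    data = [rng.gauss(0.0, 1.0) for _ in range(n)]  # generation 0: real data only
    n_fresh = int(n * fresh_fraction)
    for _ in range(generations):
        mu, sigma = fit_gaussian(data)
        synthetic = [rng.gauss(mu, sigma) for _ in range(n - n_fresh)]
        fresh = [rng.gauss(0.0, 1.0) for _ in range(n_fresh)]
        data = synthetic + fresh
    _, sigma = fit_gaussian(data)
    return sigma ** 2


if __name__ == "__main__":
    recycled = run_generations(generations=2000, n=100, fresh_fraction=0.0)
    mixed = run_generations(generations=2000, n=100, fresh_fraction=0.5)
    # Recycling only: the variance typically drifts toward 0 (the tails get lost).
    # Half fresh data: the variance typically stays close to the true value of 1.
    print(f"recycled only: {recycled:.3g}, half fresh data: {mixed:.3g}")
```

The blind-recycling run is the "unrealistically simplistic" loop the comment describes; keeping real data in every generation is enough to stop the toy model from collapsing.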

“Well curated”

Say these claims are overhyped. Wouldn’t we still reach a point where it’s true, unless humans sit down and sift through what’s allowed and what isn’t?

Not necessarily. Curation can also be done by AIs, at least in part.

As a concrete example, NVIDIA’s Nemotron-4 is a system specifically intended for generating “synthetic” training data for other LLMs. It consists of two separate LLMs: Nemotron-4 Instruct, which generates text, and Nemotron-4 Reward, which evaluates the outputs of Instruct to determine whether they’re good to train on.

Humans can still be in that loop, but they don’t necessarily have to be. And the AI can help them in that role so that it’s not necessarily a huge task.
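The generate-then-filter loop described above can be sketched roughly like this. `generate` and `score` are hypothetical callables standing in for an instruct model and a reward model; they are not NVIDIA's actual API, and the threshold and the optional `review` step (where a human could sit in the loop) are assumptions for illustration.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical stand-ins for the two roles described above:
#   Generator: prompt -> list of candidate responses (the "Instruct" role)
#   Scorer:    (prompt, response) -> quality score (the "Reward" role)
Generator = Callable[[str], List[str]]
Scorer = Callable[[str, str], float]
Reviewer = Callable[[str, str], bool]


def build_synthetic_dataset(
    prompts: List[str],
    generate: Generator,
    score: Scorer,
    threshold: float,
    review: Optional[Reviewer] = None,
) -> List[Tuple[str, str]]:
    """Keep only generated (prompt, response) pairs that pass curation.

    A pair must score at least `threshold`; if `review` is given
    (e.g. a human spot check), the pair must also pass it.
    """
    kept: List[Tuple[str, str]] = []
    for prompt in prompts:
        for response in generate(prompt):
            if score(prompt, response) < threshold:
                continue  # rejected by the reward model
            if review is not None and not review(prompt, response):
                continue  # rejected by the optional human-in-the-loop step
            kept.append((prompt, response))
    return kept


if __name__ == "__main__":
    # Toy stubs: the "generator" returns a few variants of the prompt,
    # and the "reward model" simply rejects empty responses.
    def toy_generate(prompt: str) -> List[str]:
        return [prompt.upper(), "", prompt + "!"]

    def toy_score(prompt: str, response: str) -> float:
        return 1.0 if response else 0.0

    print(build_synthetic_dataset(["hi", "bye"], toy_generate, toy_score, 0.5))
    # [('hi', 'HI'), ('hi', 'hi!'), ('bye', 'BYE'), ('bye', 'bye!')]
```

Passing a `review` callable is one way to keep humans in the loop only as a final check on what the reward model already accepted, rather than having them sift through everything.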

deleted by creator

Just unsubscribed from them. I just use their mail.

They have been going downhill for a while. Proton has worked with the feds without resistance (BBC article).

deleted by creator

At least you can run it locally. Are you just complaining because you hate AI? There’s a community for that, go complain there.

Is a paying customer not allowed to complain that they waste their time on chasing the next popular thing, instead of, I dunno, delivering important features promised years ago?

I might be naive, but given how often it’s being done, I have to imagine that of all the project initiatives at Proton, adding LLMs is a relatively easy integration compared to developing a native application. I’m sure there’s been work at Proton on those features for a long time; it’s just that the LLM team did this project quickly.

I do just want to point out that all the other paying customers also deserve the same say as you do, and in the survey linked multiple times in this thread, this feature was the second-most requested by Proton’s users. It would be obtuse of the Proton team to ignore something nearly a third of its users want, no?

second most requested feature

At 29% lmao. Also it wasn’t a requested feature, it was an answer to a predefined poll.

That’s how mass feature requests work. The company determines a set of possible new features, then asks its customers to vote on them. No one is going to sift through a million different unfeasible requests written by people who have absolutely no clue about development or the business structure. You are utterly delusional if you think that’s how this normally works.

Are you saying people were obligated to select that option in the survey? This reads like you don’t understand how polls work, and being condescending to a stranger over an email client’s customer survey results is a really weird thing to do…

New to Proton here. What important features were promised years ago?

Proton Drive Linux desktop client and system integration for Calendar on Android are the main ones I remember.

Thanks. Really interesting that they dedicated an entire team to create some AI tools but decided not to create those things you mentioned. A drive program and a calendar program seem way simpler and more straightforward.

An AI writing assistant was one of the most requested features in their community survey. Trying to construct an outrage narrative on behalf of the consumer doesn’t really work when the consumer literally voted for this.

Outrage narrative? Are you talking about me, or you?

Are you actually a Proton user, or are you just here to shitpost? Because there are features that were promised years ago and then forgotten about, because they aren’t trendy enough.

Yes, I am a paying customer and have been for several years. I didn’t vote for the feature myself and will not use it, but other people did so good for them. The Proton Mail team will be simultaneously working on multiple aspects of the service, and I’m sure there will be updates that I can enjoy in the future. I pay for the service because I want to use it today, not because I am waiting for a promised feature.

A whopping 30% of users requested it

Yes, it was the second most requested feature in the survey. Only one other feature was more requested and it was one that will likely take more time to implement.

Not really. You don’t get to decide what the company does if you are a paying customer. I am a paying customer and I want this. If you don’t like it, you can just spend your money elsewhere.