- cross-posted to:

- books@lemmy.world

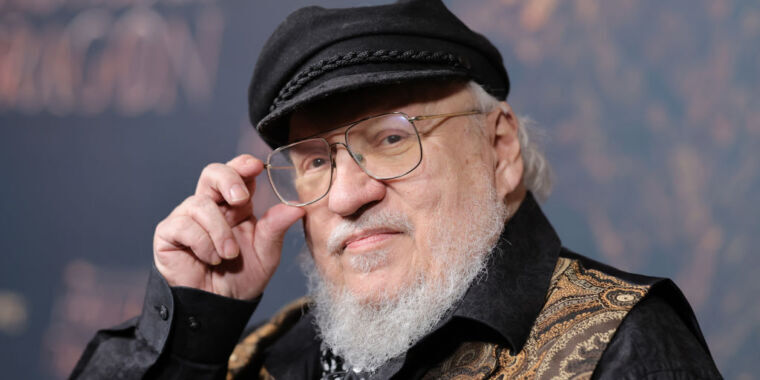

Yesterday, popular authors including John Grisham, Jonathan Franzen, George R.R. Martin, Jodi Picoult, and George Saunders joined the Authors Guild in suing OpenAI, alleging that training the company’s large language models (LLMs) used to power AI tools like ChatGPT on pirated versions of their books violates copyright laws and is “systematic theft on a mass scale.”

“Generative AI is a vast new field for Silicon Valley’s longstanding exploitation of content providers,” Franzen said in a statement provided to Ars. “Authors should have the right to decide when their works are used to ‘train’ AI. If they choose to opt in, they should be appropriately compensated.”

Responding to two lawsuits filed earlier this year by authors making similar claims, OpenAI previously argued that the authors suing “misconceive the scope of copyright, failing to take into account the limitations and exceptions (including fair use) that properly leave room for innovations like the large language models now at the forefront of artificial intelligence.”

This latest complaint argued that OpenAI’s “LLMs endanger fiction writers’ ability to make a living, in that the LLMs allow anyone to generate—automatically and freely (or very cheaply)—texts that they would otherwise pay writers to create.”

Authors are also concerned that the LLMs fuel AI tools that “can spit out derivative works: material that is based on, mimics, summarizes, or paraphrases” their works, allegedly turning their works into “engines of” authors’ “own destruction” by harming the book market for them. Even worse, the complaint alleged, businesses are being built around opportunities to create allegedly derivative works:

Businesses are sprouting up to sell prompts that allow users to enter the world of an author’s books and create derivative stories within that world. For example, a business called Socialdraft offers long prompts that lead ChatGPT to engage in ‘conversations’ with popular fiction authors like Plaintiff Grisham, Plaintiff Martin, Margaret Atwood, Dan Brown, and others about their works, as well as prompts that promise to help customers ‘Craft Bestselling Books with AI.’

They claimed that OpenAI could have trained their LLMs exclusively on works in the public domain or paid authors “a reasonable licensing fee” but chose not to. Authors feel that without their copyrighted works, OpenAI “would have no commercial product with which to damage—if not usurp—the market for these professional authors’ works.”

“There is nothing fair about this,” the authors’ complaint said.

Their complaint noted that OpenAI chief executive Sam Altman claims that he shares their concerns, telling Congress that "creators deserve control over how their creations are used” and deserve to “benefit from this technology.” But, the claim adds, so far, Altman and OpenAI—which, claimants allege, “intend to earn billions of dollars” from their LLMs—have “proved unwilling to turn these words into actions.”

Saunders said that the lawsuit—which is a proposed class action estimated to include tens of thousands of authors, some of multiple works, where OpenAI could owe $150,000 per infringed work—was an “effort to nudge the tech world to make good on its frequent declarations that it is on the side of creativity.” He also said that stakes went beyond protecting authors’ works.

“Writers should be fairly compensated for their work,” Saunders said. "Fair compensation means that a person’s work is valued, plain and simple. This, in turn, tells the culture what to think of that work and the people who do it. And the work of the writer—the human imagination, struggling with reality, trying to discern virtue and responsibility within it—is essential to a functioning democracy.”

The authors’ complaint said that as more writers have reported being replaced by AI content-writing tools, more authors feel entitled to compensation from OpenAI. The Authors Guild told the court that 90 percent of authors responding to an internal survey from March 2023 “believe that writers should be compensated for the use of their work in ‘training’ AI.” On top of this, there are other threats, their complaint said, including that “ChatGPT is being used to generate low-quality ebooks, impersonating authors, and displacing human-authored books.”

Authors claimed that despite Altman’s public support for creators, OpenAI is intentionally harming creators, noting that OpenAI has admitted to training LLMs on copyrighted works and claiming that there’s evidence that OpenAI’s LLMs “ingested” their books “in their entireties.”

“Until very recently, ChatGPT could be prompted to return quotations of text from copyrighted books with a good degree of accuracy,” the complaint said. “Now, however, ChatGPT generally responds to such prompts with the statement, ‘I can’t provide verbatim excerpts from copyrighted texts.’”

To authors, this suggests that OpenAI is exercising more caution in the face of authors’ growing complaints, perhaps since authors have alleged that the LLMs were trained on pirated copies of their books. They’ve accused OpenAI of being “opaque” and refusing to discuss the sources of their LLMs’ data sets.

Authors have demanded a jury trial and asked a US district court in New York for a permanent injunction to prevent OpenAI’s alleged copyright infringement, claiming that if OpenAI’s LLMs continue to illegally leverage their works, they will lose licensing opportunities and risk being usurped in the book market.

Ars could not immediately reach OpenAI for comment. [Update: OpenAI’s spokesperson told Ars that “creative professionals around the world use ChatGPT as a part of their creative process. We respect the rights of writers and authors, and believe they should benefit from AI technology. We’re having productive conversations with many creators around the world, including the Authors Guild, and have been working cooperatively to understand and discuss their concerns about AI. We’re optimistic we will continue to find mutually beneficial ways to work together to help people utilize new technology in a rich content ecosystem.”]

Rachel Geman, a partner with Lieff Cabraser and co-counsel for the authors, said that OpenAI’s “decision to copy authors’ works, done without offering any choices or providing any compensation, threatens the role and livelihood of writers as a whole.” She told Ars that “this is in no way a case against technology. This is a case against a corporation to vindicate the important rights of writers.”

Holy cow the comments here are so alarming. OpenAI isn’t some nonprofit trying to better humanity. They are a for-profit company making money with a product that is ILLEGALLY derived from the work of others. Why are y’all so apathetic? Pretty disheartening.

Lemmy’s opinion on the topic is often very biased towards the views of tech bros rather than writers/creators, at least that’s what I’ve observed. Tech bros have a boner for LLM AIs. They don’t have anything to lose from the development of these AIs, so they don’t seem to understand the concerns of people who do.

This has been a surprise for me. I see this community as pro privacy, anti big tech, and anti capitalism. AI seems like a hot button issue at the confluence of all three, and yet comments suggest many have rose tinted glasses for tech companies with LLMs.

To pile onto that, I was recently disturbed to find I was the dissenting voice in a comment thread by saying that we should not use AI to produce generated CSAM. The top comment defended the idea.

It is impossible to generate CSAM.

That’s the entire goddamn point of calling it CSAM. To stop people mislabeling drawings as CP, when they don’t understand that Bart Simpson doesn’t have the same moral standing as a real human child.

You cannot abuse children who do not exist.

Whether you’re opposed to those generated images anyway is a separate issue.

To be fair, regardless of where you stand on the topic, there is a big difference between a drawing of a child, and a photo realistic recreation of a child that would be indistinguishable from real csam. And if ai was used to make these images in the likeness of real children, which I guarantee many people who consume that sort of stuff would do, then it very much would still be abusive material regardless of whether it’s real or not.

The difference between any made-up image and actual child abuse is insurmountable.

Copy-pasting a real face onto such an image is still not the same kind of problem as child rape.

pro privacy, anti big tech, and anti capitalism

And you’re surprised this correlates against letting authors control information for money?

The software-freedom crowd just wants OpenAI’s models published, so no single company gets to limit access to the distilled essence of all public knowledge. Being against “big tech” has never meant being against… tech. We’re not Amish. We’re open-source diehards. Sometimes that comes through as declaring a vendetta against intellectual property, as a concept. No kidding those folks aren’t lining up behind GRRM, when he declares a robot’s not allowed to learn English from his lengthy and well-known books.

My comment addresses my perception of Lemmy users, not the open source community. These two groups are not the same as is evidenced by the frequent complaints on the front page about open source gatekeeping and quantity of open source topics.

Let me add, I’m also a long time user and contributor of/to open source and free software. I think it’s not correct to assume we’re a single group that all share the same opinion. Best, cloudy1999

These two groups massively overlap, as evidenced by the quantity of open source topics to be complained about.

You don’t get to hand-wave about a group of people, based on their opinions on this subject, and then complain about someone addressing your summary on its merits.

Overlap yes, equal no. I don’t believe either of my comments included a complaint. People are entitled to their opinions. My original and new remarks were only observations, no offense intended.

Suggesting this is a contradiction, a betrayal of stated ideals, is a damning insult.

Yeah, I was downvoted to hell in a copyright thread for suggesting that my work had worth and that I wasn’t just freely handing it over to the general public. Sounds like a bunch of 12 year olds that have never created a fucking thing in their life except for some artistic skidmarks in their underwear. These kids have a lot to learn about life.

Look at any discussion around Sync for Lemmy and you’ll get the same thing. Oh a developer created an app that is a flawless experience so far and looks great, and he wants 20 bucks for it? Burn him at the stake!

I’m all for FOSS and stuff but people here lean more entitled than they do “free and open”.

Yeah. I write software for a living and I use open source stuff extensively. I contribute sometimes to open source projects, but not everything can be open source or I’ll be back to flipping burgers.

Personally I write for free, simply because of the joy of it, and when I heard that Google was using people’s Google Drives as free training for their bots, I pulled everything. Not because I want to make a buck, but because Google sure as hell was going to make a buck off of my work without paying me a dime. It’s a little thing known as principle.

Tech bros have a boner for LLM AIs. They don’t have anything to lose from the development of these AIs, so they don’t seem to understand the concerns of people who do.

On top of this, it’s becoming increasingly clear that many tech bros have never been genuinely moved by a piece of art, whether it’s visual or written, so they genuinely don’t understand that AI art is devoid of any real emotional impact. AI art just throws together cliches. It reminds me of that shitty AI generated conversation between Plato and Bill Gates, when so many tech bros were talking about how “inspiring” it was.

Don’t get me wrong, I love these AI tools coming out but they’re so over hyped sometimes.

The standard for all tech. At the beginning of this I remember them telling me that it was all a new age. Yeah, I’ve been through a few of those now. My favorites were driverless cars and crypto, the same bros told me the same things back then too. Truth is we’ll get a few new really cool things, society will get a bit worse for it, and then we’ll find a new shiny thing.

LLMs and AI aren’t even new, I studied about them in college. 10 years ago. It’s just that we have faster hardware that can finally support them.

LLMs and AI aren’t even new, I studied about them in college. 10 years ago. It’s just that we have faster hardware that can finally support them.

This theoretical sci-fi stuff from barely a decade ago is now real enough to threaten entire industries. Yawn, am I right?

Disagreement isn’t lack of understanding. Some of us are opposed to copyright, entirely. I’m personally not. But: this is not what copyright exists to protect against. And the more it feeds on, the less it resembles any specific work.

Fuck all the replies calling people unfeeling worthless robots over this. Miserable dehumanizing hypocrites.

Personally I think the copyright system should be abolished entirely, alongside capitalism, but I am radical like that

In another thread I saw today somebody said the best way to learn how to make an Android app was to buy chatgpt. That was their advice…

The number of people I’ve seen who think Chatgpt is some sort of authority or reliable source of information is genuinely concerning.

Exactly. It’s called “chat” for a reason. Not wikipediagpt

I just find “intellectual property” copyright to be completely unethical.

I don’t exactly love the outsourcing of human creativity to AI, and personally really hope society continues to value actual human creativity, but the illegality of this stuff simply hasn’t been established.

What is clear is that directly reproducing copyrighted works is illegal. Additionally, a human taking extensive inspiration from copyrighted works is generally perfectly legal. Some of the key questions are:

- Are AI models illegally reproducing copyrighted works?

- Does the sheer ease of use and scale of AI make a meaningful difference such that extensive inspiration is illegal?

- If AIs being extensively inspired by copyrighted works is in fact legal under current law, should it be? Or should legislation be passed explicitly stating that there’s a material difference due to the drastically different speed and scale of, say, telling an AI to write Winds of Winter compared to an actual human struggling for years and years, and thus AI works need to be treated differently?

Personally, I’d say that (1) is a maybe, (2) is a no under current law, and therefore (3) is a yes, and I’d love to see legislation passed clarifying how we as a society want to treat AI works. I’m strongly of the opinion that human creativity is something very special and that it should be protected, but I am concerned about a future where that isn’t valued very much in the face of an AI that knows your personal tastes exactly and can simply generate all the “content” you want in an instant.

Isn’t the point of the lawsuit to find out if it’s illegal? Until a ruling comes down, it’s pretty ambiguous.

I was on board with AI using pre-existing works to learn from, but this was before they started hiding their content behind subscription services. Once that happened this became unethical. It’s the difference between having a Pokemon fan game, and trying to publish your own fan mod of Pokemon Sword and Shield onto the market and expecting Nintendo to just be cool with it.

The same people who were shilling for crypto and the nfts are now on board the LLM wagon, and they tend to be active on communities like these.

Yup, I remember listening to them talk about how blockchain was going to change the world, and the hype was exactly the same. There are things that will improve, tech will get a bit easier, but the tech will never live up to the hype. They’ll just find something new to chase after when the shine wears off here.

He’s written like a dozen novellas since then. I think he just got really sick of writing long stories and has enough money to just do whatever he feels like…which appears to not be finishing GoT.

I think he’s also not sure how to untangle the knots he’s tied in a satisfying way, and the disappointing reception of the tv series ending probably further killed his motivation. Like you said, he’s got plenty of money, so it’s easy to procrastinate untangling his story threads.

Absolutely agreed. GRRM usually takes the Gordian knot solution to untangling those, but I had the exact same thought that he painted himself into a corner with the complexity of the storyline. He should have broken it down into smaller chunks and done 15 books instead of 5.

Honestly, AI could be the tool that helps him break through and finish his series. It’s ridiculous that he’s suing OpenAI instead of using their tools to help him power through and finally finish what he started almost three decades ago.

Maybe he can get ChatGPT to finish it.

so long as i don’t have to read any more 3-page descriptions of a character’s garment …yes GRRM her dress is Very Nice, we get that.

Yes, plenty. Just not the one people want.

GRRM ensuring no one can complete his work once he is gone.

I caught him later on in 2006 and jesus christ. still no finish. A Feast for Crows was soul sucking to read, I can’t believe i did that to myself.

The first country to kill off LLMs with draconian interpretations of copyright law will simply be handing the industry to other countries on a golden platter. For this reason, I don’t see the U.S. ruling in any sort of way that would damage the AI industry too much. There is simply too much money involved.

If enough countries join in then there will be a barrier to actually making money off of it. Even if you become the leader in AI if your method is just banned in other countries the money won’t be there.

That being said, it doesn’t seem like it’s going to get very far anyway.

Given how we train models (content and math), AI is not practical to ban or legislate away. While the public applications of AI are for content generation and NLP, as @Rinox alluded to, the military applications are where we are going to see the most focus from the government. As an example, the LANTIRN targeting pod uses SVMs to profile aircraft from afar, and it took enormous engineering to get it accurate. Comparable object detection functionality can be obtained with NNs and off-the-shelf GPUs. Countries like China already have “differing philosophies” when it comes to intellectual property rights, so we can remove the largest manufacturing market from the potential list of those who would blanket ban AI. Ditto on any possibility of their military forgoing AI.

The real problem here is copyright law, which has extended protections far and above the length of time that is reasonable. Had we terms of say 35 years, we could simply train on older material.

It’s not about making money, not only at least. The other reason why the US, China and other countries are obsessed with AI, LLM, NN, ML is because it may prove decisive for their militaries.

What is thought by most militaries today is that we are at a turning point of sorts and the next generation of weapons will be powered by AI in some capacity, and the more we go on, the more AI will be involved (target acquisition, reaction, drone and missile guidance, APS, AA, etc)

They are fighting an uphill battle to get anything. It’s a pretty strong argument that training a model is fair use.

Funny, but I don’t think there’s a very strong argument that training AI is fair use, especially when you consider how it intersects with the standard four factors that generally determine whether a use of copyrighted work is fair or not.

Specifically stuff like:

courts tend to give greater protection to creative works; consequently, fair use applies more broadly to nonfiction, rather than fiction. Courts are usually more protective of art, music, poetry, feature films, and other creative works than they might be of nonfiction works.

Courts have ruled that even uses of small amounts may be excessive if they take the “heart of the work.” … Photographs and artwork often generate controversies, because a user usually needs the full image, or the full “amount,” and this may not be a fair use.

(Keep in mind that many popular AI models have been trained on vast amounts of entire artworks, large sections of text, etc.)

Effect on the market is perhaps more complicated than the other three factors. Fundamentally, this factor means that if you could have realistically purchased or licensed the copyrighted work, that fact weighs against a finding of fair use. To evaluate this factor, you may need to make a simple investigation of the market to determine if the work is reasonably available for purchase or licensing. A work may be reasonably available if you are using a large portion of a book that is for sale at a typical market price. “Effect” is also closely linked to “purpose.” If your purpose is research or scholarship, market effect may be difficult to prove. If your purpose is commercial, then adverse market effect may be easier to prove.

To me, this factor is by far the strongest argument against AI being considered fair use.

The fact is that today’s generative AI is being widely used for commercial purposes and stands to have a dramatic effect on the market for the same types of work that they are using to train their data models–work that they could realistically have been licensing, and probably should be.

Ask any artist, writer, musician, or other creator whether they think it’s “fair” to use their work to generate commercial products without any form of credit, consent or compensation, and the vast majority will tell you it isn’t. I’m curious what “strong argument” that AI training is fair use is, because I’m just not seeing it.

AI training is taking facts which aren’t subject to copyright, not actual content that is subject to it. The original work or a derivative isn’t being distributed or copied. While it may be possible for a user to recreate a copyrighted material with sufficient prompting, the fact it’s possible isn’t any more relevant than for a copy machine. It’s the same as an aspiring author reading all of Martin’s work for inspiration. They can write a story based on a vaguely medieval England full of rape and murder, without paying Martin a dime. What they can’t do is call it Westeros, or have the main character be named Eddard Stork.

There may be an argument that a copy needs to be purchased to extract the facts, but that’s not any special license, a used copy of the book would be sufficient.

AI isn’t doing anything that hasn’t already been done by humans for hundreds of years, it’s just doing it faster.

It’s the same as an aspiring author reading all of Martin’s work for inspiration. They can write a story based on a vaguely medieval England full of rape and murder, without paying Martin a dime. What they can’t do is call it Westeros, or have the main character be named Eddard Stork.

This isn’t about a person reading things and taking inspiration and writing a similar story though. This is about a company consuming copyrighted works and selling software that is built on those copyrighted works and depends on those copyrighted works. It’s different.

A company is just a person in the legal world. They are consuming copyrighted works just like an aspiring author. They are then using that knowledge to generate something new.

Legally, I think you’re basically right on.

I think what will eventually need to happen is society deciding whether this is actually the desired legal state of affairs or not. A pretty strong argument can be made that “just doing it faster” makes an enormous difference on the ultimate impact, such that it may be worth adjusting copyright law to explicitly prohibit AI creation of derivative works, training on copyrighted materials without consent, or some other kinds of restrictions.

I do somewhat fear that, in our continuous pursuit for endless amounts of convenient “content” and entertainment to distract ourselves from the real world, we’ll essentially outsource human creativity to AI, and I don’t love the idea of a future where no one is creating anything because it’s impossible to make a living from it due to literally infinite competition from AI.

I think that fear is overblown, ai models are only as good as their training material. It still requires humans to create new content to keep models growing. Training ai on ai generated content doesn’t work out well.

Models aren’t good enough yet to actually fully create quality content. It’s also not clear that the ability for them to do so is imminent, though maybe one day they will. Right now these tools are really only good for assisting a creator in making drafts, or identifying weak parts of the story.

Models aren’t good enough yet to actually fully create quality content.

Which is why I really hate the fact that the programmers and the media have dubbed this “intelligence”. Bigger programs and more data don’t just automatically make something intelligent.

This is such a weird take imo. We’ve been calling agent behavior in video games AI since forever but suddenly everyone has an issue when it’s applied to LLMs.

If I took 100 of the world’s best-selling novels, wrote each individual word onto a flashcard, shuffled the entire deck, then created an entirely new novel out of that (with completely original characters, plot threads, themes, and messages), could it be said that I produced stolen work?

What if I specifically attempted to emulate the style of the number one author on that list? What if instead of 100 novels, I used 1,000 or 10,000? What if instead of words on flashcards, I wrote down sentences? What if it were letters instead?

At some point, regardless of the means by which the changes were derived, a transformed work must pass a threshold where, on content alone, it is sufficiently different that it can no longer be considered derivative.

Y’all are missing the point, what you said is about AI output and is not the main issue in the lawsuit. The lawsuit is about the input to AI - authors want to choose if their content may be used to train AI or not (and if yes, be compensated for it).

There is an analogy elsewhere in this thread that is pretty apt - this scenario is akin to a university using pirated textbooks to educate their students. Whether or not the student ended up pursuing a field that uses the knowledge does not matter - the issue is the university should not have done so in the first place (and remember, the university is not only profiting off of this but also saving money by shafting the authors).

I imagine that the easiest way to acquire specific training data for an LLM is to download ebooks from Amazon. If a university professor pirates a textbook and then uses extracts from various pages in their lecture slides, the cost of the crime would be the cost of a single textbook. In the case of a novel, GRRM should be entitled to the cost of a set of Ice & Fire if they could prove that the original training material was illegally pirated instead of legally purchased.

Once a copy of a book is sold, an author typically has no say in how it gets used outside of reproduction.

I’m not sure your assessment of the “cost of damages” is really accurate but again, that’s not the point.

The point of the lawsuit is about control. If the authors succeed in setting precedent that they should control the use of their work in AI training, then they can easily negotiate the terms with AI tech companies for much more money.

The point of the lawsuit is not one-time compensation, it’s about the control in the future.

Not even collage art automatically counts as fair use.

I don’t see how this could work.

ChatGPT’s output is not a derivative of a specific novel.

As I understand it, it’s not about the output it’s about the input.

Same basic principle as why universities don’t simply give students a pirated copy of the entire textbook.

Same principle why Google can index pretty much all books in existence. They were sued over this and won. Same thing will happen here.

As long as these models are not providing the copyrighted material to their users they should be safe.

@Akisamb yes I think you’re right.

That one was really interesting too. It has put a few more limits on its full text since those days, but I don’t know how much that was a result of the suit.

The authors from my country tried to have a group lawsuit against Google because within my country, if your books are in public libraries, then you get yearly compensation based on how many copies are in circulation.

But Google and America are both a lot richer and more powerful than a handful of authors from New Zealand, so I don’t know what they thought they could achieve.

Universities giving away pirated textbooks is output.

@hypelightfly It’s input into the students’ brains.

And the very concept of “pirated” textbooks is itself monstrous. Textbooks should be free, in universities, schools, and everywhere. I can see some arguments for copyright maybe possibly being somewhat beneficial for artists (with reasonable limits… for five or maybe even ten years, say, and most definitely no longer than the author’s lifespan, and of course not applicable to companies), but copyrighting learning tools can only be harmful to society.

Hmm… if that were true then why would they be expecting $150k per book?

My understanding is that in copyright infringement the liability is the amount of income you have deprived the author of. If you’ve only copied 1 book and not produced derivative works then the loss is the value of the book.

This is why it’s such an interesting case. We think of the LLM as one entity, but another way of looking at it is that it’s a lot of iterations.

The pirated book text files that are doing the rounds with the AI seem to be multiple iterations as well.

One $150 textbook x 1,000 students = $150k.

Even with public libraries, they pay volume licenses for ebooks based on how many “copies” can be lent simultaneously per year. It’s not considered as the library owning one copy when digital files are being lent.

Sorry mate this is just daft.

Did you read the article? Most of it is about derivative works.

LLMs fuel AI tools that “can spit out derivative works: material that is based on, mimics, summarizes, or paraphrases” their works, allegedly turning their works into “engines of” authors’ “own destruction” by harming the book market for them.

They’re trying to claim that they have been financially harmed by the unauthorised use of their work.

Even if LLMs are separate iterations you could train multiple LLMs with one copy of the book - the library is not loaning multiple copies simultaneously.

Correct me if I’m wrong but I was under the impression they don’t yet have a derivative work that’s close enough to claim plagiarism. Without that, they don’t have a leg to stand on.

This is the only part I think could potentially hold water:

Authors should have the right to decide when their works are used to ‘train’ AI. If they choose to opt in, they should be appropriately compensated.

As for libraries:

the library is not loaning multiple copies simultaneously.

I’m not sure why you’re saying that.

With ebook platforms the business model completely depends on the concept of multiple copies. This isn’t my opinion, it’s just a fact about how they bill libraries. Random stats.

Even with physical copies libraries sometimes buy several (and in my country publishers get special compensation based on how many copies are in public libraries per year)

It may not calculate well mathematically, or be factual at all, but ChatGPT can sure as hell wax poetic with some direction and input.

But the best I can say is that, for now, ChatGPT should only be relegated to fanfics, tbh, lmao…

This is the best summary I could come up with:

Yesterday, popular authors including John Grisham, Jonathan Franzen, George R.R. Martin, Jodi Picoult, and George Saunders joined the Authors Guild in suing OpenAI, alleging that training the company’s large language models (LLMs) used to power AI tools like ChatGPT on pirated versions of their books violates copyright laws and is “systematic theft on a mass scale.”

They claimed that OpenAI could have trained their LLMs exclusively on works in the public domain or paid authors “a reasonable licensing fee” but chose not to.

But, the claim adds, so far, Altman and OpenAI—which, claimants allege, “intend to earn billions of dollars” from their LLMs—have “proved unwilling to turn these words into actions.”

Saunders said that the lawsuit—which is a proposed class action estimated to include tens of thousands of authors, some of multiple works, where OpenAI could owe $150,000 per infringed work—was an “effort to nudge the tech world to make good on its frequent declarations that it is on the side of creativity.”

On top of this, there are other threats, their complaint said, including that “ChatGPT is being used to generate low-quality ebooks, impersonating authors, and displacing human-authored books.”

We’re having productive conversations with many creators around the world, including the Authors Guild, and have been working cooperatively to understand and discuss their concerns about AI.

The original article contains 1,001 words, the summary contains 207 words. Saved 79%. I’m a bot and I’m open source!

Maybe AI can finish Winds of Winter for him

Can’t be any worse than season 8.

Is this bot going to get sued by boomer luddites, too?

We are at the point now that we could invent Artificial Intelligence and develop it to a point that it could write the final GoT book faster than GRRM, and better than HBO.

If I tried to publish a fan game using the source code of Mega Man Battle Network, Capcom would be on my ass. If you train your artificial intelligence off of the writing styles of popular authors and they don’t see a lick? They should be on yours.

Martin is exactly the person this threatens, because what he puts out is ultimately a type of slop. When you are committed to the “gritty realism” tenet of how anyone can die and plot lines might just become irrelevant, sabotaging any coherent notion of themes or meaning beyond that basic premise, what you are left with is basically just fake history and fake politics, a more elaborate version of putting army men and OCs on a map and just kind of vomiting meaningless worldbuilding details and “lore” about them fighting until enough people have died that you call it a book.

AI is largely incapable of effectively conveying themes except in the bluntest manner, but if all you need is a willingness to write violence, sexual pandering, arbitrary setting descriptions, and dramatic language, AI is more than up to that task, though you will still need to edit to fix contradictory details.

Be fair though: as fun of a gimmick as that is at first, it makes for a completely boring and pointless story. I can’t get invested in the stakes when pretty much everyone involved is going to die a pointless death that doesn’t even bring anything about. Sure, it’s realistic and dark, but it’s not a good story.

Eventually you just find yourself having to abandon this and keep a few token protagonists alive, like Jon Snow or whoever, completely destroying the entire point of what you were trying to do to begin with, because you cannot have a narrative with a bunch of corpses unless your story is about the afterlife.

the limitations and exceptions (including fair use) that properly leave room for innovations

BUT WE CAN’T INNOVATE WITHOUT STEALING YOUR WORK AND FEEDING IT INTO THE CONTRAPTION!!! YOU’RE STIFLING INNOVAAAAAAAATIONNNN!!!

the only good use of IP law is when it’s being used to cudgel capitalist hubris, get fucked

‘move fast and break things’ enjoyers when they’re served papers since it turns out the thing they broke is the law

yeah… the quality of comments isn’t very high on this topic. Sure, George boy, summer prince, should go write right now and give the world the story he promised, but this goes far, far beyond that. Take your heads out of your butts, people.

Solid point but I’m afraid it’s mostly shouting at the sun for rising at this point.

Brave new world, like it or not.

I’m just one guy and my opinions don’t matter, but uh… boo fucking hoo.

The fuck is an English language AI supposed to be trained on, if not popular examples of the English language? Churning the entire global corpus down to a mess of algebra is extremely transformative. Never mind that the end goal is a generalized program to produce anything, in limitless quantities, based on high-level descriptions and incomplete examples.

We can’t even offer to use texts from thirty-odd years ago, in the public domain… because copyright maximalism like this shit right here has ruined the public domain. Surprisingly - A Game Of Thrones would still be in-copyright. It’s from 1996. But there’s another generation of living authors, and a whole mess of dead people, who haven’t written much since well before then, and made quite a lot of money off what they did. That culture belongs to its audience now. That’s what the money was for.

And that’s fine, but they’re owed royalties for it. If you create something, you have the right to charge for it. Until we leave capitalism behind and move towards the Federation, people need to pay for things like food. GRRM is fun to poke fun at, but think of all the college theses or papers that were shamelessly stolen from individuals and can be used to create new ones. Those people deserve to eat for what they made.

If copyright needs to change then let’s update copyright, but it’s not fair to blame the people who created this stuff for wanting what they’re owed. As you point out, it would be worthless without what they contributed, and so by that, they have worth and value they are owed.

Stolen! Theft! Robbery!!!

Fuck that. They used publicly-available documents, and they used them to measure letter frequencies. The model just guesses what letter comes next. That’s it. The more English text we shovel in, the more generic the model gets.

You don’t pay royalties on every work you’ve ever read, before writing these comments.

When this technology is working properly, that’s the level of impact each work has.