Moderation is work. Trolls traumatize. Humans powertrip. All this could be resolved via AI.

AI is extremely fallible and often makes mistakes, so no.

removed by AI moderator

removed by AI moderator

removed by AI moderator

The moderation impossibility theorem says that your idea will fail. Also, what do you think AI is? People are keen to say “AI”, but they’re incredibly tentative about providing any details.

More importantly, what problem do you think you’re solving? We all agree that trolling and power tripping occur, but what specifically are you trying to address? I’m not sure you know, and this is really important.

AI moderation would lead to every comment being a prompt injection.

The AI used doesn’t necessarily have to be an LLM. A simple model for determining the “safety” of a comment wouldn’t be vulnerable to prompt injection.

“Confidently Incorrect” describes terrible moderators and AI.

Oh nice phrase. Synonymous with smugnorant.

Wisdom and ignorance look alike in that there is a dearth of uncertainty.

> Moderation is work. Trolls traumatize. Humans powertrip.

Correct.

> All this could be resolved via AI.

Incorrect, for all the same reasons that facial recognition in ‘AI’ is unethical. All ~~theftboxes~~ adversarial networks are built by humans, most of whom in the ‘AI’ space come standard-equipped with built in racist biases. You see it all the time in facial recognition algorithms that couldn’t tell the difference between a hundred Black people if you ran 'em all side by side. The same thing would happen with AI moderators; they will more likely than not moderate to right-wing white sensibilities, over-target and powertrip on ethnic minorities, and only really contribute to the general ‘apartheid-supporter’ vibe that most of the western internet has.

tl;dr please stop going to bat for theftboxes and the techbro STEMlords who build them.

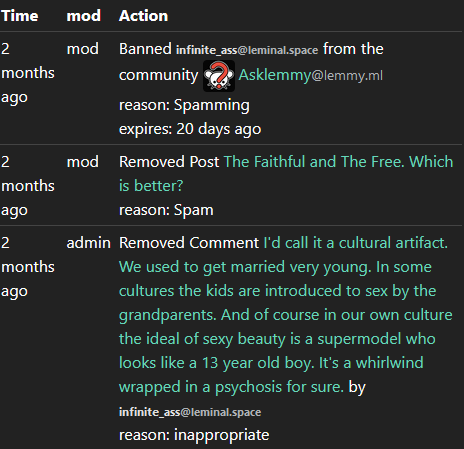

oh hay look. Of course this is some weirdo who spams creep shit like this all over.

What the unholy fuck

every time one of these creeps comes in concern trolling with some big bold idea for “fixing” problems with moderation it’s because they are a creep who got banned for being a creep.

Also of course one of their posts is in there removed for NSFW and I ain’t even gonna look at the context on that one. The lemmy.ml modlog on this guy is an entire page long. On other instances it gets even longer.

Good looking out; I wasn’t even THINKING about modlogging this guy 'cause I really only took him for the average techbro

More fools me ig lmfao

lmao we broke him he’s just whining about safe spaces now.

Fascists always show their whole ass so easily.

Scratch a techbro, a lolbert bleeds

Removed by mod

This is a safe space for us, because we judiciously ban racist gooner brain fascists and libs like you.

Removed by mod

Shut the fuck up loser.

Annnnnnnd we’re done. Y’know, it’s hilarious: this is the exact kind of thing I expect a theftbox moderator to leave alone.

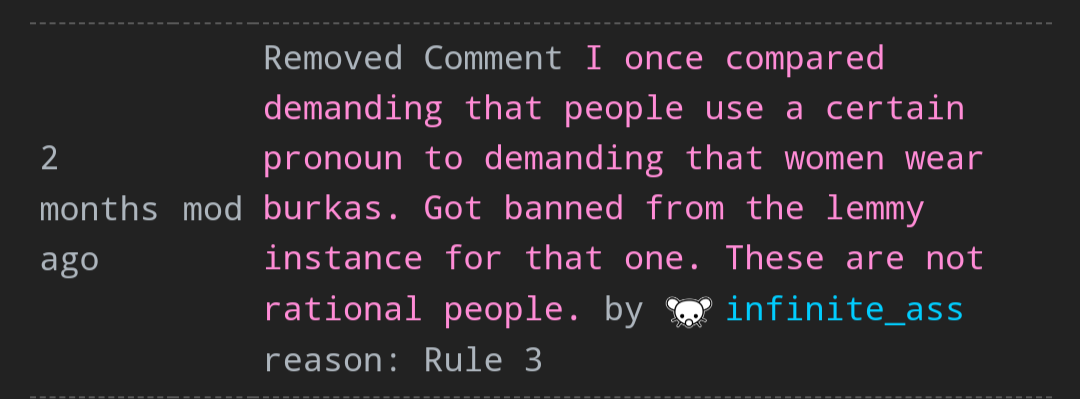

I scrolled through the modlog on their home instance and like clockwork…

CW: transphobia

What if it worked 90%? It doesn’t need to be perfect, just better.

I’d like to see an experiment.

> What if it worked 90%? It doesn’t need to be perfect, just better.

An injustice against one of us is an injustice against all of us. 90% isn’t good enough. Hell, 99% isn’t good enough.

But human moderators aren’t perfect either. And they are often biased.

Human moderators who tweak the AI settings are still biased. So you haven’t solved any problem by throwing AI in the middle of it all.

But they can be checked and balanced by other moderators unless it’s a one mod/one admin system; in which case then it’s just a personal fief. Where’s the checks and/or balances for theftbox moderation?

Y’know, if I had a physical watch, I’d be looking at my wrist really condescendingly right now.

AIs can be checked too. And judged, tweaked, etc. Obviously.

Groups of moderators can be just as biased as individual moderators. More so, even, given the amplifying effects of echo chambers.

Go look at YouTube, they are already doing it over there.

And it’s horrible. Sometimes my comments are taken down automatically, but YouTube never tells me why, so I don’t know what I need to change, and it’s even hard to find out if my comments have been taken down. The fastest way is for me to write a comment and then wait 10 seconds and then try to edit it.

You’re asking for something better but what’s your baseline? What are you measuring? What’s your metric? How would you know if it got better, and more importantly, how would we as a user base in general know if it got better?

I sure as shit wouldn’t. Did you even read any of what frauddogg just said? Genuine question because they explained pretty clearly why it is a terrible and stupid idea.

Yes I read it. I just don’t think it significates.

And this is something you should reexamine about yourself then if you don’t understand the significance of racism and other bigoted biases in AI.

I am pretty much done arguing with you because you’re a creep and a troll.

> significates

Nice word-a-day calendar, creepazoid

You don’t even want to see an experiment? But that’s the pudding.

I repeat: did you actually read any of the concrete argument laid out before you, or are you just being willfully obtuse?

I could see it functioning very well as an aid in moderation, but not as any kind of solution, which is true of most things AI is used for today.

In the case of Lemmy and other federated social media platforms, there’s going to be the usual cost hindrance, and then the ethical side of it: excessive electricity usage and the sourcing of training data.

Disregarding that, as most know and everyone should know, AIs are never to be considered reliable or accurate. They will produce false positives, wrongly flagging comments, posts, and images.

However, having an AI aggregate a list of potentially bad comments and posts, then having a user manually check the results, could help with moderation efficiency. Because how many users actually report comments and posts? How many do mods actually miss? There’s a lot of content and limited time.
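To make the "aid, not solution" idea concrete, here is a rough sketch of what such a triage step might look like. Everything in it is an assumption for illustration: the `Comment` type, the `score` function (a toy watchlist stand-in for whatever model would actually be used), the watchlist words, and the `threshold` value. The point it tries to show is only that the tool ranks items for a human and never removes anything on its own.

```python
from dataclasses import dataclass


@dataclass
class Comment:
    # Hypothetical minimal comment record, not Lemmy's actual schema.
    comment_id: int
    text: str


def score(comment: Comment) -> float:
    """Toy stand-in for a model: fraction of words on a hypothetical watchlist."""
    watchlist = {"spam", "scam"}
    words = comment.text.lower().split()
    if not words:
        return 0.0
    return sum(w in watchlist for w in words) / len(words)


def build_review_queue(comments, threshold=0.2):
    """Return comments scoring at or above threshold, highest score first.

    Nothing is removed or banned here; the output is a list for a human mod.
    """
    scored = [(score(c), c) for c in comments]
    flagged = [(s, c) for s, c in scored if s >= threshold]
    flagged.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in flagged]


comments = [
    Comment(1, "nice post"),
    Comment(2, "scam scam link"),
    Comment(3, "this is spam maybe"),
]
for c in build_review_queue(comments):
    print(c.comment_id, c.text)
```

A mod would still open each queued item and decide for themselves, so false positives cost review time rather than someone's account.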

I’ve read about people being automatically banned by AI for saying something along the lines of “I hate burritos” because it had the word “hate” in it, so the AI judged their comment as hate speech and auto-banned them even though they were talking about food or a videogame character. AI is not very good at reading context and the “A” in “AI” is an important detail here.
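For anyone wondering how that kind of false positive happens, here is a minimal sketch of a context-blind keyword filter. The `BLOCKED_WORDS` set and the `naive_flag` function are made up for illustration and are not taken from any real moderation tool; real systems are presumably more elaborate, but the failure mode is the same whenever a word is matched without its context.

```python
import re

# Hypothetical blocklist; a real filter would carry a much longer list.
BLOCKED_WORDS = {"hate"}


def naive_flag(comment: str) -> bool:
    """Flag a comment if any word is on the blocklist, ignoring all context."""
    tokens = re.findall(r"[a-z']+", comment.lower())
    return any(tok in BLOCKED_WORDS for tok in tokens)


# A food opinion is flagged exactly like actual hate speech would be.
print(naive_flag("I hate burritos"))
print(naive_flag("Burritos are great"))
```

The first call is flagged and the second is not, even though neither comment is hateful: the filter only sees the word, not what it is aimed at.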

If you’re referring to the data models we have now (as in, not AGI), it’s a solid no for a whole host of reasons.

As it is, it is not intelligent. It is capable of structuring immense datasets and identifying patterns throughout said datasets, but it is incapable of comprehending them at a conceptual level. Even if it can mimic the verbal patterns of context, nuance, humour, sarcasm, irony and even coded speech, it is not capable of understanding any of them. It is not an intelligence as we know and understand it, it’s just a really, really complex math equation.

As it is, all AI is still primarily run by a human consciousness. It cannot decide for itself what to do, it has to be pre-programmed. This means that any biases the human programming said AI might have will be transferred to the program itself given the immensity of data it is meant to process, so you’re right back at human fallibility. At best, contemporary AI is to manual moderation what a chainsaw is to chopping down trees with an axe - just an implement to aid humans in doing exactly what they did before, but maybe faster. That’s it.

deleted by creator

Lol. Imagine being the last human. Living in a bunker. Facebooking with your “friends”

More “accurate” or otherwise, moderating is community engagement. We cultivate our communities by posting relevant content and removing what we find unacceptable. What are we doing if we are not doing both? Allowing a computer to sort the former and the latter? No thank you.

It’s absurd to give any amount of power over people, however trivial, to a thing which is incapable of thought.

Well giving that power to a human isn’t so great either, clearly.

We already use text filters as a moderation bot. So we’re just looking at improving the bot.

Maybe we’re just looking for a way for the bot to recognize more complex patterns.

Aren’t there already some automated mod tools working to delete CSAM and shit? That’s a form of AI.

But all moderation problems you identify (work, biases) would not fully go away with AI moderation. Someone has to build and manage those tools (work) and train them on how to moderate (incorporating their biases as they do so).

Yes, as soon as we actually invent AI.

The Large Language Models we have now aren’t really it. When we have programs which can come to a well reasoned decision and actually explain the logic of said decision, then we’ll start having something approaching AI. For now, it’s just a well directed random number generator.

I’m just curious how this would differ from automatic moderating tools we already have. I know moderating actually can be a traumatic job due to stuff like gore and CSEM, but we already have automatic filters in place for that stuff, and things still slip through the cracks. Can we train an AI to recognize it when it hasn’t already been put into a filter? And if so, wouldn’t it hit false positives and require an appeal system, which could still be used to traumatize people?