I’m trying to better understand hosting a Lemmy instance. From lurking in discussions, it seems like some people are hosting from the cloud or a VPS. My understanding is that it’s better to futureproof by running your own home server, so that you have the data and the most control over hardware, software, etc. My understanding is also that by hosting an instance on the cloud or a VPS, you are offloading the data/information to a third party.

Are people actually running their own actual self-hosted servers from home? Do you have any recommended guides on running a Lemmy Instance?

Selfhosting is the act of hosting applications on “hardware you control”. That could be rented or owned; it’s the same to us. You could go out and buy a server to host your applications, but there are a few issues you might run into that could prevent you from simply standing up a server rack in your spare room. From shitty ISPs to lack of hardware knowledge, there are plenty of reasons to just rent a VPS. Either way, you’re one of us :)

But you don’t control the hardware if you run it on a VPS?

You control the hardware you are provisioned and the software you run on it, which is enough for me. Unless you’re looking for a job in the server administration/maintenance field, the physical hardware access component matters less IMO.

You definitely don’t control the hardware. Someone else at some remote server farm or something does.

Some of them offer what they call bare-metal provisioning, and I wonder if some even offer ILOM-type access. That’s pretty much control of the hardware for me; it’s just that you can’t plug in a disk or a memory stick.

If your server has IPMI, there’s little difference between being there in person and not.

Self hosting basically means you are running the server application yourself. It doesn’t matter if it’s at home, on a cloud service or anywhere else.

I wouldn’t recommend hosting a social network like lemmy, because you would be legally responsible for all the content served from your servers. That means a lot of moderation work. Also, these types of applications are very demanding in terms of data storage, you end up with an ever growing dataset of posts, pictures etc.

But self hosting is very interesting and empowering. There are a lot of applications you can self host, from media servers (Plex, Jellyfin), personal cloud (like Google Drive) with Nextcloud, blocking ads with Pi-hole, sync servers for various apps like Obsidian, password manager Bitwarden, etc. You can even make your own website by coding it, or by using a CMS platform like WordPress.

Check the Awesome Self-hosted list on GitHub; it has a ton of great stuff.

And in terms of hardware, any old computer or laptop can be used; just install your favorite server OS (Linux, FreeBSD/OpenBSD, even Windows Server). If you have enough horsepower and memory, you can play with virtualization too, using ESXi or Proxmox, so you can run multiple servers at once on the same computer.

Me, yes. But it’s still selfhosting if you do it on a VPS, and probably easier, too. I mainly do it at home because I can have multiple large hard disks this way, and storage is kind of expensive in the cloud.

Depending on where you are, a hard drive that runs 24/7 can cost you quite a bit of money ($6/month or even more, just for the hard drive). If you consider the upfront cost of a hard drive, the benefit of hosting at home gets even smaller. NVMe is where you really save money hosting at home. Personally, I do both, because cloud is cheap and you can have crazy bandwidth.

I know. You pretty much need to know what you’re doing, and do the maths. Know the reliability (MTBF/MTTF) and the price. Don’t forget to multiply it by two (as I forgot) because you want backups. And factor in the cost of operation, and the hardware you need to run to operate those HDDs. My HDD spins down after 5 minutes. I live in Europe and really get to pay for electricity as a consumer; a data center pays way less. My main data, mail, calendar and contacts, OS, databases and everything that needs to be there 24/7 fit on a 1TB solid-state disk that doesn’t need much energy while idle. So the HDD is mostly spun down.

Nonetheless, I have a 10TB HDD in the basement. I think it was a bit less than 300€ when I bought it a few years ago, but I could be mistaken. I pay about 0.34€/kWh for (green) electricity, but the server uses less than 20W on average. That makes it about 4€ per month in electricity for me. And I think my home server cost me about 1000€, and I’ve had it since 2017. So that would be another ~15€ per month if I say I buy hardware for ~1100€ every 6 years. Let’s say I pay about 20€/month for the whole thing. I’m not going to factor in the internet connection, because I need that anyway. (And I probably forgot to factor in that I upgraded the SSD twice and bought additional RAM when it got super cheap. And I don’t know the reliability of my HDDs…)
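For anyone wanting to redo this arithmetic with their own numbers, here is a minimal Python sketch using the figures above (20W average draw, 0.34€/kWh, ~1100€ of hardware every 6 years). It assumes an average month of about 730 hours and treats 20W as a flat, constant draw, so the electricity part lands just under 5€ rather than 4€; a real average below 20W brings it down accordingly.

```python
# Rough monthly cost of an always-on home server, using the numbers from the post.
HOURS_PER_MONTH = 365 * 24 / 12  # average month, 730 hours


def electricity_per_month(avg_watts: float, eur_per_kwh: float) -> float:
    """Energy cost of a machine drawing avg_watts around the clock."""
    kwh = avg_watts * HOURS_PER_MONTH / 1000
    return kwh * eur_per_kwh


def hardware_per_month(purchase_eur: float, lifetime_years: float) -> float:
    """Spread a hardware purchase evenly over its expected lifetime."""
    return purchase_eur / (lifetime_years * 12)


power = electricity_per_month(avg_watts=20, eur_per_kwh=0.34)
hardware = hardware_per_month(purchase_eur=1100, lifetime_years=6)
print(f"electricity ~{power:.2f} EUR, hardware ~{hardware:.2f} EUR, "
      f"total ~{power + hardware:.2f} EUR/month")
```

Swap in your own wattage, tariff and replacement cycle; the internet connection is left out, as in the post.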

I also have a cheap VPS, and they’d want 76.27€/month (915.24€ per year) if I were to buy 10TB of storage from them. (But I think there are cheaper providers out there.) That would have me protected against hard disk failures. It’ll probably get cheaper with time, I can scale it effortlessly, and the hard disks are spun up 24/7. The hard disks are faster ones and their internet connection is also way faster. And I can’t make mistakes like I can with my own hardware, such as having an HDD fail early or buying hardware that needs excessive power, and that’d ruin my calculation… In my case, I’m going with my ~20€/month, and I hope I did the maths correctly. Best bang for the buck is probably: don’t have the data available 24/7, and just buy an external 10TB hard drive if your concern is just pirating movies. Plug it in via USB into whatever device you’re using. And make sure to have backups ;) And if you don’t need vast amounts of space and want to host a proper service for other people: just pay for a VPS somewhere.
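The same kind of comparison for the VPS side, as a small Python sketch. It takes the quoted 76.27€/month for 10TB and the rough 20€/month home estimate as given; neither figure includes a second disk for backups, which the earlier post says you should budget for by multiplying by two.

```python
def yearly(monthly_eur: float) -> float:
    """Annual cost from a monthly price."""
    return monthly_eur * 12


vps = yearly(76.27)   # quoted VPS price for 10 TB of storage
home = yearly(20.0)   # rough all-in home-server estimate, no backup disks

print(f"VPS:  {vps:.2f} EUR/year")
print(f"home: {home:.2f} EUR/year")
print(f"difference: {vps - home:.2f} EUR/year")
```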

You forgot something in your calculations, you don’t need a complete VPS for the *arrs. App hosting/seedboxes are enough for that and you can have them for very, very cheap.

Ahem, I don’t need a seedbox at all. I use my VPS to host Jitsi-Meet, PeerTube and a few experiments. The *arrs are for the people over at !piracy@lemmy.dbzer0.com

That you probably need a VPS for, yes.

I’d say there are levels to selfhosting. Hosting your stuff on the cloud is selfhosting, but hosting it on your own hardware is a more “pure” way of doing it IMO. Not that it’s better, both have their advantages, but it’s certainly more committed to the ideal.

I don’t know what hosting on a VPS should be called, but it is definitely not self hosting, since you are hosting your services on someone else’s servers. Didn’t that use to be called colo or something like that?

I thought colo was your hardware in someone else’s data center.

For me though a VPS is still self hosting because you own your applications data and have control over it.

You’re less beholden to the whims of a company to change the software or cut you off. With appropriate backups you should be able to move to a new cloud provider fairly easily.

I disagree. Selfhosting is not specific to hardware location for me. My own git server is definitely selfhosted, VPN, and so on.

I agree that having your own hardware at home is a more pure way of doing it, but I’d just call that a Homelab. I personally just combine both.


Yup, it’s more like self administration or something like that

actually have a server at home

I haven’t got any piece of hardware that was sold with the first name “Server”.

But there’s this self-built PC in my room that’s running 24/7 without having to reboot in several years…

Well technically a “server” is a machine dedicated to “serving” something, like a service or website or whatever. A regular desktop can be a server, it’s just not built as well as a “real” server.

There are, though, reasons to stray from certain consumer products for server equipment.

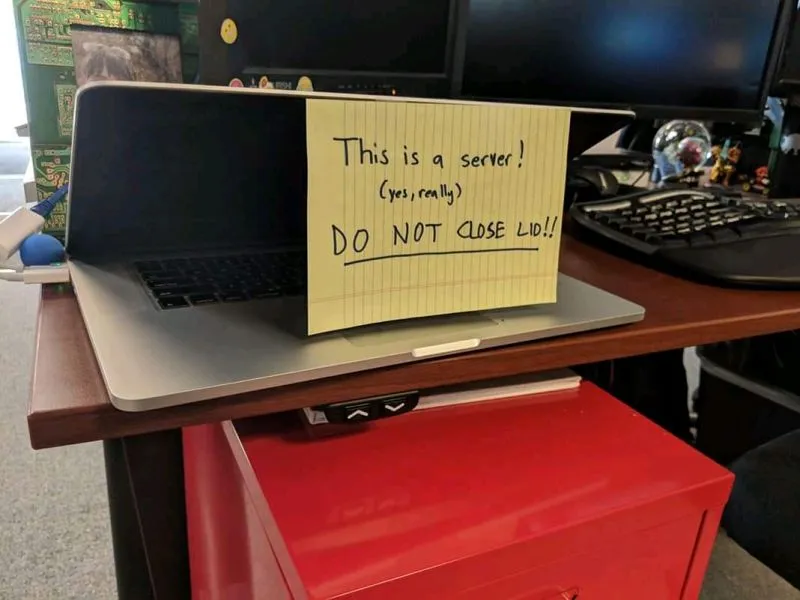

Yeah I’d stay away from Mac too… but seriously most modern laptops can disable any sleep/hibernation on lid close

My go-to lately is the Lenovo Tiny. You can pick them up super cheap with 6-12 month warranties; throw in some extra RAM and a new drive. I haven’t had any fail on me yet.

You should think before releasing dangerous information on the internet!

You can get a 2core 8GB / 240GB for 75€!!

Uh oh, I think I’ll have to buy one now…

This is my little setup at the moment. Each has an 8500T CPU, 32GB RAM, a 2TB NVMe and a 1TB SATA SSD, all running in a Proxmox cluster.

Edit: also check out the Dell Micro or the HP… uh, I want to say it’s the G6 Micro? You might need to search for what it’s actually called.

Heh, this is awesome 😅

Thanks :D the frame and all parts are self designed and 3d printed… was a fun project

The whole thing runs from just 2 power cables with room for another without adding any extra power cables

Not at all overkill? :-D

Future-proofing, or is it really used? I don’t know Proxmox; is it some Docker launcher thingy?

Very cool anyways!

Proxmox is like ESXi; it lets you set up virtual machines. So you can fire up a virtual Linux machine and allocate it, say, 2GB of RAM and limit it to 2 CPU cores, or give it the whole lot, depending on what you need to do.

Having them in a cluster lets them move virtual machines between the physical machines and keep complete copies, so if one goes down the next can just start up.
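To make the cluster failover idea a bit more concrete, here is a toy Python model: each VM gets a slice of RAM and cores on a node, and when a node goes down its VMs are restarted on whichever remaining node has the most free RAM. This is a simplified illustration, not how Proxmox’s HA manager actually schedules. The node names, sizes and placement rule are hypothetical, it assumes VM disks sit on shared or replicated storage, and it treats cores as a hard limit even though hypervisors usually allow overcommitting vCPUs.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One physical host in a hypothetical three-node cluster."""
    name: str
    ram_gb: int
    cores: int
    online: bool = True
    vms: dict = field(default_factory=dict)  # VM name -> (ram_gb, cores)

    def free(self) -> tuple[int, int]:
        used_ram = sum(ram for ram, _ in self.vms.values())
        used_cores = sum(c for _, c in self.vms.values())
        return self.ram_gb - used_ram, self.cores - used_cores


def place(nodes: list[Node], vm: str, ram_gb: int, cores: int) -> str:
    """Start a VM on the online node with the most free RAM that can still fit it."""
    fits = [n for n in nodes
            if n.online and n.free()[0] >= ram_gb and n.free()[1] >= cores]
    if not fits:
        raise RuntimeError(f"no node has room for {vm}")
    target = max(fits, key=lambda n: n.free()[0])
    target.vms[vm] = (ram_gb, cores)
    return target.name


def fail_node(nodes: list[Node], failed: Node) -> dict[str, str]:
    """Take a node offline and restart its VMs on the surviving nodes."""
    failed.online = False
    orphans, failed.vms = failed.vms, {}
    return {vm: place(nodes, vm, ram, c) for vm, (ram, c) in orphans.items()}


nodes = [Node("pve1", 32, 6), Node("pve2", 32, 6), Node("pve3", 32, 6)]
place(nodes, "jellyfin", 4, 2)   # all nodes equally empty, so the first one (pve1)
place(nodes, "pihole", 2, 2)     # pve1 now has less free RAM, so pve2
moved = fail_node(nodes, nodes[0])
print(moved)                     # {'jellyfin': 'pve3'}
```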

It is a little overkill, I’m probably only using about 20% of its resources but it’s all for a good cause. I’m currently unable to work due to kidney failure but I’m working towards a transplant. If I do get a transplant and can return to work, being able to say “well this is my home setup and the various things I know how to do” looks a lot better than “I sat on my ass for the last 4 years so I’m very rusty”

This whole setup cost me about AUD $1000 and uses 65-70W on average.

Lenovo tiny

Doesn’t that mean, tiny fans howling all day long?

Only if you’ve got it cranking all day. I’ve got a couple of Tiny systems (they’re Micro, which is the same thing) that are silent when idle and nearly silent at a load average below 5. It’s only if I try to spin up a heavy, CPU-bound process that their single fan spins fast enough to be noticeable.

So don’t use one as a mining rig, but if you want something that runs x64 workloads at 9-20 watts continuously, they’re pretty good.

Even running at full speed, mine are pretty quiet, but I also have 80mm silent, low-RPM fans blowing air across them, which seems to help.

I also recently went through with fresh thermal paste

Just set it to “do nothing” when lid is closed. That’s all.

100%, and this is why businesses don’t use laptops as servers… typically 😂.

FWIW, this free app solves for that issue well; I have several clammed Macs running it right now:

Just break the screen off. Call it a headless server.

How do you install security updates etc without restarting?

Linux servers prompt you to restart after certain updates. Do you just not restart?
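On Debian and Ubuntu, the update tooling usually signals a pending restart by creating /var/run/reboot-required, plus a /var/run/reboot-required.pkgs list of the packages responsible. Assuming that convention, a small Python check like the one below could feed a monitoring script or a cron job; other distributions use different mechanisms (RHEL-family systems have needs-restarting -r, for example).

```python
from pathlib import Path


def reboot_required(flag: Path = Path("/var/run/reboot-required")) -> bool:
    """True if the Debian/Ubuntu update tooling has flagged a pending reboot."""
    return flag.exists()


def packages_needing_reboot(
    pkgs: Path = Path("/var/run/reboot-required.pkgs"),
) -> list[str]:
    """Packages that asked for the reboot, if the tooling recorded them."""
    if not pkgs.exists():
        return []
    return [line.strip() for line in pkgs.read_text().splitlines() if line.strip()]


if __name__ == "__main__":
    if reboot_required():
        print("reboot pending for:", ", ".join(packages_needing_reboot()) or "unknown packages")
    else:
        print("no reboot pending")
```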

Enterprise distributions can live-patch the running kernel, so many security updates can be applied without a reboot.

Microsoft needs to get its shit together because reboots were a huge point of contention when I was setting up automated patching at my company.

Good luck with that, I have all reboot options off but yesterday it just rebooted like that. Thanks MS.

The right way ™ is to have the application deployed with high availability. That is, every component should have more than one server serving it. Then you can take them offline for a reboot sequentially, so that there’s always a live one serving users.
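The rolling-reboot approach can be sketched in Python as follows. The reboot and health-check callables are hypothetical stand-ins for whatever your load balancer and tooling provide; the point is the invariant: never take a server out of rotation unless another healthy one is serving, and stop the rollout if a rebooted server does not come back.

```python
def rolling_reboot(servers, reboot, is_healthy):
    """Reboot servers one at a time, keeping at least one healthy server in service.

    servers:    names of servers behind a load balancer (hypothetical)
    reboot:     callable that drains and reboots one server (hypothetical)
    is_healthy: callable returning True once a server is serving traffic again
    """
    if len(servers) < 2:
        raise ValueError("need at least two servers to avoid downtime")
    done = []
    for name in servers:
        others = [s for s in servers if s != name]
        if not any(is_healthy(s) for s in others):
            raise RuntimeError(f"refusing to reboot {name}: no other healthy server")
        reboot(name)
        if not is_healthy(name):
            raise RuntimeError(f"{name} did not come back; stopping the rollout")
        done.append(name)
    return done


# Simulated fleet: record how many servers were still serving during each reboot.
state = {"web1": True, "web2": True, "web3": True}
live_during_reboot = []


def fake_reboot(name):
    state[name] = False                             # out of rotation
    live_during_reboot.append(sum(state.values()))  # servers still serving users
    state[name] = True                              # back after the reboot


order = rolling_reboot(list(state), fake_reboot, lambda s: state[s])
print(order, live_during_reboot)  # ['web1', 'web2', 'web3'] [2, 2, 2]
```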

This is taken to an extreme in cloud best practices, where we don’t even update any servers. We update the versions of the packages we want in some source code file. From that, we build a new OS image that contains the updated packages along with the application the server will run, and it’s ready to boot. Then, in some sequence, we kill server VMs running the old image and create new ones running the new one. Finally, the old VMs are deleted.
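The immutable-image variant, sketched the same way in Python: servers are never patched in place; each old VM is replaced by one booted from the new image, and the replacement is created before the old VM is deleted so capacity never drops. create_vm and the image names are hypothetical placeholders for a real cloud API.

```python
import itertools

_ids = itertools.count(1)


def create_vm(image):
    """Hypothetical stand-in for a cloud API call that boots a VM from an image."""
    return {"id": next(_ids), "image": image}


def replace_fleet(fleet, new_image):
    """Move a fleet onto new_image without updating any VM in place.

    Create-before-destroy: for each old VM, boot a replacement first, then delete
    the old one, so the fleet never shrinks below its original size.
    """
    fleet = list(fleet)
    sizes = []
    for old in [vm for vm in fleet if vm["image"] != new_image]:
        fleet.append(create_vm(new_image))  # new VM running the new image
        sizes.append(len(fleet))
        fleet.remove(old)                   # old VM is deleted, never patched
        sizes.append(len(fleet))
    return fleet, sizes


fleet = [create_vm("app-v1") for _ in range(3)]
fleet, sizes = replace_fleet(fleet, "app-v2")
print([vm["image"] for vm in fleet], min(sizes))  # ['app-v2', 'app-v2', 'app-v2'] 3
```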

You can just restart… with modern SSDs it takes less than a minute. No one is going to have a problem with 1 minute of downtime per month or so.

install security updates etc without restarting?

Actually, I am lazy with updates on the “bare metal” Debian/Proxmox. It does nothing but host several VMs. Even the hard disks belong to a VM that provides all the file shares.

Do you have any recommended resources for getting started? I do have a secondary PC…

First, you need a use-case. It’s worthless to have a server just for the sake of it.

For example, you may want to replace google photos by a local save of your photos.

Or you may want to share your movies across the home network. Or be able to access important documents from any device at home, without hosting them on any kind of cloud storage.

Or run a bunch of automation at home.

TL;DR choose a service you use and would like to replace by something more private.

Get a copy of VMware ESXi or Proxmox and load it on that secondary PC.

Proxmox absolutely changed the game for me learning Linux. Spinning up LXC containers in seconds to test out applications or simply to learn different Linux OSs without worrying about the install process each time has probably saved me days of my life at this point. Plus being able to use and learn ZFS natively is really cool.

I’ve been using ESXi (free copy) for years. Same situation. Being able to spin up virtual machines or take a snapshot before a major change has been priceless. I started off with smaller NUC computers and have upgraded to full-fledged desktops.

recommended resources for getting started?

I don’t know where to start today, honestly.

I started with books a long time ago:

https://www.amazon.com/Algorithms-Data-Structures-Niklaus-Wirth/dp/0130220051

https://www.amazon.de/-/en/Andrew-S-Tanenbaum/dp/0132126958

https://www.amazon.de/Programming-Language-Prentice-Hall-Software/dp/0131103628

The simple way is to Google ‘yunohost’ and install that on your spare machine, then just play around with what that offers.

If you want, you could also dive deeper by installing Linux (e.g. Ubuntu), then installing Docker, then spinning up Portainer as your first container.

Years? Lol you should update that software.

Well, there are specific hardware configurations that are designed to be servers. They probably don’t have graphics cards but do have multiple CPUs, and are often configured to run many active processes at the same time.

But for the most part, “server” is more related to the OS configuration. No GUI, strip out all the software you don’t need, like browsers, and leave just the software you need to do the job that the server is going to do.

As to updates, this also becomes much simpler since you don’t have a lot of the crap that has vulnerabilities. I helped manage a computer department with about 30 servers, many of which were running Windows (gag!). One of the jobs was to go through the huge list of Microsoft patches every few months. The vast majority of them “require a user to browse to a certain website” in order to be exploited. Since we simply didn’t have anyone using browsers on them, we could ignore those patches until we did a big “catch-up” patch once a year or so.

Our Unix servers, HP-UX or AIX, simply didn’t have the same kind of patches coming out. Some of them ran for years without a reboot.

“Self-hosted” means you are in control of the platform. That doesn’t mean you have to own the platform outright, just that you hold the keys.

Using a VPS to build a Nextcloud server vs using Google Drive is like the difference between leasing a car and taking a taxi. Yes, you don’t technically own the car like you would if you bought it outright, but that difference is mostly academic. The fact is you’re still in the driver’s seat, controlling how everything works. You get to drive where you want to, in your own time, you pick the music, you set the AC, you adjust the seats, and you can store as much stuff in the trunk as you want, for as long as you want.

As long as you’re the person behind the metaphorical wheel, it’s still self-hosting.

Are people actually running their own actual self-hosted servers from home?

My Lemmy instance is hosted in my basement.

I’m sure the original spirit of selfhosting is actually owning the hardware (whether enterprise- or consumer-grade) but depending on your situation, renting a server could be more stable or cost effective. Whether you own the hardware or not, we all (more or less) have shared experiences anyway.

Where I live, there are some seasons wherein the weather could be pretty bad and internet or electricity outages can happen. I wouldn’t mind hours or even days of downtime for a service whose users are only myself or a few other people (i.e. non-critical services) like a private Jellyfin server, a Discord bot, or a game server.

For a public Lemmy server, I’d rather host it on the cloud where the hardware is located in a datacenter and I pay for other people to manage whatever disasters that could happen. As long as I make regular backups, I’m free to move elsewhere if I’m not satisfied with their service.

As far as costs go, it might be cheaper to rent VMs if you don’t need a whole lot of performance. If you need something like a dedicated GPU, then renting becomes much more expensive. Also consider your own electricity costs and internet bills and whether you’re under NAT or not. You might need to use Cloudflare tunnels or rent a VPS as a proxy to expose your homeserver to the rest of the world.

If the concern is just data privacy and security, then honestly, I have no idea. I know it’s common practice to encrypt your backups but I don’t know if the Lemmy database is encrypted itself or something. I’m a total idiot when it comes to these so hopefully someone can chime in and explain to us :D

For Lemmy hosting guides, I wrote one which you can find here but it’s pretty outdated by now. I’ve moved to rootless Docker for example. The Lemmy docs were awful at the time so I made some modifications based on past experiences with selfhosting. If you’re struggling with their recommended way of installing it, you can use my guide as reference or just ask around in this community. There’s a lot of friendly people who can help!

Yep, big ol’ case under my desk with some 20TB of storage space.

Most of what I host is piracy related 👀

How much of the 20TB is used?

Free space is wasted space

~19.5 tb of hardcore midget porn

~500 gigs of whale sounds to help me sleep

Whoops that was backwards, my bad

~19.5 tb of hardcore whale porn

~500 gigs of midget sounds to help me sleep

Whale sounds! Oh man, I’m gonna add that to ELF space radio and rain recordings!

There’s about 3.5 TB to go out of an actual 18 in the server.

I have another 2TB to install but it’s not in yet.

I’m also transcoding a lot of my media library to H.265 (x265) to save space.

I don’t download for the sake of downloading, usually, and I delete stuff if I don’t see value in keeping it.

What is a good transcoder? I haven’t run my media through one. Is the space saving significant? Did you lose video quality?

In order:

FFmpeg, yes, no.

I refer you to this comment for more info.

I have seen a saving of 60-80% per file at lower resolutions like 720p or 1080p, which makes the server time well worth it.

A folder of 26 files totaling 61GB went down to 10.5GB, for example.
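For reference, a Python sketch that builds an FFmpeg command for this kind of re-encode and computes the space saved. The flags are standard FFmpeg options (-map 0 keeps every input stream, -c copy copies all streams, and -c:v libx265 then overrides that for video only), but the CRF value of 28 (libx265’s default) and the file names are assumptions; lower the CRF if you notice artifacts. The sketch only prints the command rather than running it.

```python
def x265_command(src: str, dst: str, crf: int = 28) -> list[str]:
    """Build an ffmpeg argument list that re-encodes video to HEVC with libx265.

    Audio, subtitles and other streams are copied untouched; lower crf means
    larger files and higher quality.
    """
    return [
        "ffmpeg", "-i", src,
        "-map", "0",                       # keep every stream from the input
        "-c", "copy",                      # copy all streams by default...
        "-c:v", "libx265", "-crf", str(crf),  # ...but re-encode video to HEVC
        dst,
    ]


def savings(before_gb: float, after_gb: float) -> float:
    """Fraction of space saved by the transcode."""
    return 1 - after_gb / before_gb


print(" ".join(x265_command("episode.mkv", "episode.x265.mkv")))
print(f"{savings(61, 10.5):.0%} saved")  # the 26-file folder from the post
```

Passing the list to subprocess.run would execute it; keeping the output as .mkv avoids container limits on copied subtitle formats.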

Some stuff is just better hosted in a proper data center. Like mail, DNS or a search engine. Some stuff, like sensitive data, is better hosted on your own hardware in your home.

There are definitely benefits on running a server at home but you could say the same of a VPS. As long as you control it, it is self hosted in my book.

Certain cloud providers are as secure, if not more secure, than a home lab. Amazon, Google, Microsoft, et al. are responding to 0-day vulnerabilities on the reg. In a home lab, that is on you.

To me, self-hosted means you deploy, operate, and maintain your services.

Why? Varied…the most crucial reason is 1) it is fun because 2) they work.

Listing Microsoft cloud after their recent certificate mess is an interesting choice.

Also, the “cloud responds to vulnerability” only works if you’re paying them to host the services for you - which definitely no longer is self hosting. If you bring up your own services the patching is on you, no matter where they are.

If you care about stuff like “have some stuff encrypted with the keys in a hardware module” own hardware is your only option. If you don’t care about that you still need to be aware that “cloud” or “VPS” still means that you’re sharing hardware with third parties - which comes with potential security issues.

Well, with bare metal, yes, but when your architecture is virtual, configuration rises in importance as the first line of defense. So it’s not just “yum update” and a reboot to remediate a vulnerability; there is more to it, and the odds of a home lab admin keeping up with that seem remote to me.

Encryption is interesting, there really is no practical difference between cloud vs self hosted encryption offerings other than an emotional response.

Regarding security issues, it will depend on the provider but one wonders if those are real or imagined issues?

Well with bare metal yes, but when your architecture is virtual, configuration rises in importance as the first line of defense

You’ll have all the virtualization management functions in a separate, properly secured management VLAN with limited access. So the exposed attack surface (unless you’re selling VM containers) is pretty much the same as on bare metal: Somebody would need to exploit application or OS issues, and then in a second stage break out of the virtualization. This has the potential to cause more damage than small applications on bare metal - and if you don’t have fail over the impact of rebooting the underlying system after applying patches is more severe.

On the other hand, already for many years - and way before container stuff was mature - hardware was too powerful for just running a single application, so it was common to have lots of unrelated stuff there, which is a maintenance nightmare. Just having that split up into lots of containers probably brings more security enhancements than the risk of having to patch your container runtime.

Encryption is interesting, there really is no practical difference between cloud vs self hosted encryption offerings other than an emotional response.

Most of the encryption features advertised for cloud are marketing bullshit.

“Homomorphic encryption” as a concept just screams “side channel attacks” - and indeed as soon as a team properly looked at it they published a side channel paper.

For pretty much all the technologies advertised by both AMD and Intel to solve the various problems of trying to make people trust untrustworthy infrastructure with their private keys, side-channel attacks or other vulnerabilities exist.

As soon as you upload a private key into a cloud system you lost control over it, no matter what their marketing department will tell you. Self hosted you can properly secure your keys in audited hardware storage, preventing key extraction.

Regarding security issues, it will depend on the provider but one wonders if those are real or imagined issues?

Just look at the Microsoft certificate issue I’ve mentioned: data was compromised because of that, they tried to deny the claim, and it was only possible to show that the problem exists because some US agencies paid extra for receiving error logs. Microsoft’s solution to keep you calm? “Just pay extra as well so you can also audit our logs to see if we lose another key.”

The Azure breach is interesting in that it was against MSFT SaaS. We’re talking produce; ready-to-eat meals are in the deli section!

The encryption tech in many cloud providers is typically superior to what you run at home to the point I don’t believe it is a common attack vector.

Overall, hardened containers are more secure than bare metal, as the attack vectors are radically different.

A container should refuse to execute processes that have nothing to do with the container’s function. For example, there is no reason to have a superuser in a container, and the underlying container host should never be accessible from the devices connecting to the containers it hosts.
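As a rough illustration of that principle, here is a lint-style Python check over a Docker Compose-style service definition. user, privileged, cap_drop and read_only are real Compose keys, but the selection of checks and the example services are assumptions, and passing these checks does not by itself make a container secure.

```python
def hardening_problems(service: dict) -> list[str]:
    """Flag settings in a Compose-style service that widen the attack surface.

    Only four keys are checked; this is a sketch, not a full security review.
    """
    problems = []
    user = str(service.get("user", "")).split(":")[0]
    if user in ("", "root", "0"):
        problems.append("runs as root or image default (set a non-root 'user')")
    if service.get("privileged", False):
        problems.append("privileged mode gives the container broad host access")
    if "ALL" not in service.get("cap_drop", []):
        problems.append("Linux capabilities not dropped (cap_drop: [ALL])")
    if not service.get("read_only", False):
        problems.append("root filesystem is writable (read_only: true)")
    return problems


# Hypothetical services for illustration.
web = {"image": "example/app", "user": "1000:1000", "cap_drop": ["ALL"], "read_only": True}
legacy = {"image": "example/legacy", "privileged": True}

print(hardening_problems(web))     # []
for problem in hardening_problems(legacy):
    print("legacy:", problem)
```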

Bare metal is an emotional illusion of control, especially with consumer devices between the ISP gateway and the bare metal.

It’s not that self-hosted setups can’t run the same level of detect-and-reject configuration, it’s just that I would be surprised if they did. Securing self-hosted, internet-facing home labs could almost be its own community and is definitely worth a discussion.

My point is that it is simpler IMO to button up a virtual environment, and that includes a virtual network environment (by definition, cloud hosting).

The encryption tech in many cloud providers is typically superior to what you run at home to the point I don’t believe it is a common attack vector.

They rely on hardware functionality in Epyc or Xeon CPUs for their stuff. I have the same hardware at home and don’t use that functionality, as it has massive problems. What I do have at home is smartcard-based key storage for all my private keys: keys can’t be extracted from there, and the only outside copy is a passphrase-encrypted base64 printout on paper in a sealed envelope in a safe place. Cloud operators will tell you they can also do the equivalent, but they’re lying about that.
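If you want to make a similar paper backup, a Python sketch of the encoding side might look like this. It base64-encodes a blob that is assumed to be passphrase-encrypted already (for example with GnuPG’s symmetric mode), splits it into 64-character lines, and appends a CRC32 to each line so typos are easy to locate when typing it back in. The line width and checksum scheme are assumptions, not a standard format.

```python
import base64
import zlib


def to_paper(blob: bytes, width: int = 64) -> list[str]:
    """Encode an already-encrypted blob as base64 lines, each tagged with a CRC32."""
    text = base64.b64encode(blob).decode("ascii")
    chunks = [text[i:i + width] for i in range(0, len(text), width)]
    return [f"{chunk} {zlib.crc32(chunk.encode()):08x}" for chunk in chunks]


def from_paper(lines: list[str]) -> bytes:
    """Verify each line's checksum, then decode the original blob."""
    parts = []
    for n, entry in enumerate(lines, 1):
        chunk, crc = entry.rsplit(" ", 1)
        if f"{zlib.crc32(chunk.encode()):08x}" != crc:
            raise ValueError(f"checksum mismatch on line {n}, check for typos")
        parts.append(chunk)
    return base64.b64decode("".join(parts))


ciphertext = bytes(range(256))  # stand-in for the encrypted key file
sheet = to_paper(ciphertext)
print("\n".join(sheet))
assert from_paper(sheet) == ciphertext
```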

And the homomorphic encryption thing they’re trying to sell is just stupid.

Overall, hardened containers are more secure vs bare metal as the attack vectors are radically diff.

Assuming you put the same single application on bare metal the attack vectors are pretty much the same - but anybody sensible stopped doing that over a decade ago as hardware became just too powerful to justify that. So I assume nowadays anything hosted at home involves some form of container runtime or virtualization (or if not whoever is running it should reconsider their life choices).

My point is that it is simpler imo to button up a virtual env and that includes a virtual network env

Just like the container thing above, pretty much any deployment nowadays (even just simple low-powered systems coming close to the old bare metal days) will contain at least some level of virtual networking. Traditionally we were binding everything to either localhost or the world, and going from there, but nowadays, even for a simple setup, it’s way more sensible to have only something like an nginx container with a public IP, and all services isolated in separate containers with various host-only network bridges.
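The reverse-proxy layout described above can be modelled in a few lines of Python: only the proxy publishes a host port, and each backend shares a private network with the proxy but not with the other backends. The service and network names are hypothetical; in an actual Compose file those networks could also be declared with internal: true so they have no outside connectivity.

```python
# Hypothetical Compose-style layout: only nginx is reachable from outside.
services = {
    "nginx": {"ports": ["443:443"], "networks": ["app_net", "wiki_net"]},
    "app":   {"networks": ["app_net"]},
    "wiki":  {"networks": ["wiki_net"]},
}


def exposed(services: dict) -> list[str]:
    """Services that publish ports on the host."""
    return sorted(name for name, svc in services.items() if svc.get("ports"))


def reachable_from(services: dict, name: str) -> list[str]:
    """Other services sharing at least one network with `name`."""
    nets = set(services[name].get("networks", []))
    return sorted(other for other, svc in services.items()
                  if other != name and nets & set(svc.get("networks", [])))


print(exposed(services))                 # ['nginx']
print(reachable_from(services, "app"))   # ['nginx'], app cannot reach wiki
print(reachable_from(services, "nginx")) # ['app', 'wiki']
```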

I like how you have a home smartcard. I can’t believe many do.

Why do you think cloud operators are lying?

I like how you have a home smartcard. I can’t believe many do.

Pretty much anyone should. There’s no excuse not to at least keep your personal PGP keys in some USB dongle. I personally wouldn’t recommend YubiKey for various reasons, but there are a lot more options nowadays. Most of those vendors also now have HSM options which are reasonably priced and scale well enough for small hosting purposes.

I started a long time ago with empty smartcards and a custom card applet - back then it was quite complicated to find empty smartcards as a private customer. By now I’ve also switched to readily available modules.

Why do you think cloud operators are lying?

One of the key concepts of the cloud is that your VMs are not tied to physical hardware. Which in turn means the key storage also isn’t - which means extraction of keys is possible. Now they’ll tell you some nonsense how they utilize cryptography to make it secure - but you can’t beat “key extraction is not possible at all”.

For the other bits, I’ve mentioned side-channel attacks a few times. Then there’s AMD’s encrypted memory (SEV), which claims to fully isolate VMs from each other, with multiple published attacks. And we have AMD’s PSP and Intel’s ME, both with multiple published attacks. I think there was also a published attack against the key storage I described above, but I don’t remember the name.

I agree that our stuff is unlikely to be the victim of a targeted attack in the cloud, but it could be impacted by a targeted attack on something sharing bare metal with you. Or somebody could perfect one of the currently possible attacks to run it at a larger scale for data collection. In all cases, you’re unlikely to be properly informed about the data loss.

I mean, as long as you patch regularly and keep backups, you should be good enough. That’s most of what responding to a zero day is, anyway, patching.

Most people who “self host” things are still doing it on a server somewhere outside their home. Could be a VPS, a cloud instance, colocated bare metal, …

My “server” is just my old gaming PC that I slapped Ubuntu on.

My “Server” is a small 32-bit ARM Orange Pi.

“Server” is a description of function, not form factor. You are OK.

No that’s a homelab. Selfhosted applies to the software that you install and administrate yourself so you have full control over it. If it was about running hardware at home we’d see more posts about hardware.

I would say that a homelab is more about learning, developing, breaking things.

Running esoteric protocols, strange radio/GPS setups, setting up and tearing down CI/CD pipelines, autoscalers, over-complicated networks and storage arrays.

Whereas (self)hosting is about maintaining functionality and uptime.

You could self-host with hardware at home, or on cloud infra. Ultimately it’s running services yourself instead of paying someone else to do it. I guess self-hosting is a small step away from earning money (or does earn money): reliable uptime, regular maintenance, etc.

Homelabbing is just a money sink for fun, learning and experience. Perhaps your homelab turns into self-hosting. Or perhaps part of your self-hosting infra is dedicated to a lab environment.

Homelab is as much about software as it is about hardware. Trying new filesystems, new OSs, new deployment pipelines, whatever.