Talking to a hallucination is, in fact, not good for you.

Who knew that “simulating” human conversations based on extruded text strings that have no basis in grounded reality or fact could send people into spirals of delusion?

Are companies who force employees to use LLMs going to be liable for the mental health issues they produce?

Should they be? Absolutely. Will they be? lol

Good small talk tutorial. Terrible everything else

Hey now, my walls are perfect companions, they may be silently judging me but they are always supportive and never sycophantic.

Don’t forget to check if the wall is load-bearing before relying on it for support.

One recent peer-reviewed case study focused on a 26-year-old woman who was hospitalized twice after she believed ChatGPT was allowing her to talk with her dead brother.

I feel like the bar for the Turing test is lower than ever… You can’t tell ChatGPT apart from your own relatives??

My cousin lost her young daughter a few years back. At Christmas, she had used AI to put her daughter in her Christmas photo. I didn’t have words, because it made her so happy, and I can’t fathom her grief, but man. Felt pretty fucked.

I feel you. I can’t deny the comfort it brought her, but I also can’t help but feel like it is training her to reject her grief.

Not that I’m in any position to pass judgement. I just hope it doesn’t lead to anything more severe.

So the developing psychosis could be causing the AI use?

That’s what the article says, yes:

“The technology might not introduce the delusion, but the person tells the computer it’s their reality and the computer accepts it as truth and reflects it back, so it’s complicit in cycling that delusion,” Sakata told the WSJ.

Thing that tells you exactly what you want to hear causes delusions?

Whaaat?

I completely understand why articles like this need to exist. Information about what ‘AI’ actually is needs to be spread. That being said, I also can’t shake the impression that this is just incredibly obvious. It’s like one of those studies that go as far as scanning a dog’s brain in an MRI while it looks at its owner, just to find out whether dogs actually love their owners.

Like, thank you mystery researcher on the internet — but you could have saved the helium by just sticking to Occam’s Razor.

“Doctors say”!

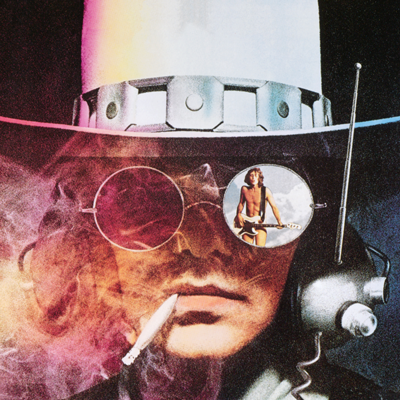

One could call it… Cyberpsychosis?

I’d say know your tools. People misusing “stuff” and being vulnerable to it is nothing new. Yet in a lot of cases, we rely on people making independent and mature decisions. LLMs are no different. That said, meaningful (technological) safeguards should of course be implemented wherever possible.

By their very nature, there is no way to implement robust safeguards in an LLM. The technology is toxic, and the best that could happen is that something else, hopefully not based on brute-forcing the production of a stream of tokens, gets developed and makes it obvious that LLMs are a false path, a road that should not be taken.

If AI is that dangerous, it should need a licence to use, same as a gun or car or heavy machinery.