Title is TLDR. More info about what I’m trying to do below.

My daily driver computer is Laptop with an SSD. No possibility to expand.

So for storage of lots n lots of files, I have an old, low resource Desktop with a bunch of HDDs plugged in (mostly via USB).

I can access Desktop files via SSH/SFTP on the LAN. But it can be quite slow.

And sometimes (not too often; this isn’t a main requirement) I take Laptop to use elsewhere. I do not plan to make Desktop available outside the network so I need to have a copy of required files on Laptop.

Therefore, sometimes I like to move the remote files from Desktop to Laptop to work on them. To make a sort of local cache. This could be individual files or directory trees.

But then I have a mess of duplication. Sometimes I forget to put the files back.

Seems like Laptop could be a lot more clever than I am and help with this. Like could it always fetch a remote file which is being edited and save it locally?

Is there any way to have Laptop fetch files, information about file trees, etc, located on Desktop when needed and smartly put them back after editing?

Or even keep some stuff around. Like lists of files, attributes, thumbnails etc. Even browsing the directory tree on Desktop can be slow sometimes.

I am not sure what this would be called.

Ideas and tools I am already comfortable with:

- rsync is the most obvious foundation to work from but I am not sure exactly what would be the best configuration and how to manage it.

- luckybackup is my favorite rsync GUI front end; it lets you save profiles, jobs etc which is sweet

- freeFileSync is another GUI front end I’ve used but I am preferring lucky/rsync these days

- I don’t think git is a viable solution here because there are already git directories included, there are many non-text files, and some of the directory trees are so large that they would cause git to choke looking at all the files.

- syncthing might work. I’ve been having issues with it lately but I may have gotten these ironed out.

Something a little more transparent than the above would be cool but I am not sure if that exists?

Any help appreciated, even just ideas on what to web search for, because I am stumped even on that.

You can mount remote with rclone and fine tune caching to your liking: https://rclone.org/commands/rclone_mount/#vfs-file-caching
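A minimal sketch of what such a mount could look like, written as a small Python helper that only builds the command line. The remote name `desktop:` (an SFTP remote you would have set up with `rclone config`), the paths, and the cache limits are all made-up placeholders; the flags themselves (`--vfs-cache-mode`, `--vfs-cache-max-size`, `--vfs-cache-max-age`) are the ones described on the linked page.

```python
import shlex


def rclone_mount_command(remote, mountpoint, cache_max_size="20G", cache_max_age="72h"):
    """Build an `rclone mount` command line with full VFS file caching.

    remote and mountpoint are placeholders; the size and age limits are
    arbitrary example values, not recommendations.
    """
    return [
        "rclone", "mount", remote, mountpoint,
        "--vfs-cache-mode", "full",              # cache reads and writes on local disk
        "--vfs-cache-max-size", cache_max_size,  # upper bound for the local cache
        "--vfs-cache-max-age", cache_max_age,    # evict files not accessed for this long
    ]


# Hypothetical remote and mount point, for illustration only.
cmd = rclone_mount_command("desktop:/srv/storage", "/home/me/desktop-files")
print(shlex.join(cmd))
```

The helper only prints the command, so nothing gets mounted until you paste it into a terminal (or hand the list to `subprocess.run`) yourself.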

This sounds like a setup that is broken in a few ways. First, you need to clean up the desktop. Start by replacing the USB drives. It is a bad idea to use them as they are more likely to fail and have limited speeds. I would get either moderately high RPM hard drives or sata SSDs. Both will be more reliable and have greater speed. Just make sure that they are properly mounted. This is especially important for HDDs as they are very sensitive to vibration and need to be kept still by a proper mount with rubber padding.

For the desktop itself, make sure it is fast enough to provide enough throughput. You should be using a CPU with at least 4 cores and DDR4 memory. The more memory you have the more data can be cached. I would go for 16 or 32gb and if you can go even higher. Also make sure you are using gigabit Ethernet with a gigabit switch.

Now for the software. I would install TrueNAS Scale as it uses ZFS, which is a great filesystem for data storage. TrueNAS can be a little tricky but it is all controllable through a web interface, and if you need help, guides can be found online.

For accessing the data, I would use either an SMB or NFS share. SMB tends to be simpler and has better cross platform support. NFS can be faster but it is tricky to configure. Make sure that your laptop has an Ethernet connection as doing things over WiFi is going to be slow. You might be able to get away with it if you have a newer WiFi revision with a newer WiFi card.

If you are still looking to solve the original problem, you can use Syncthing. Syncthing has a few-click install for TrueNAS and you can find guides on how to configure it in a way that works for you.

Maybe Syncthing is the way forward. I have used it for years and am reasonably comfortable with it. When it works, it works. The problem is that when it doesn’t work, it’s hard to solve or even to know about. For the present use case it would involve making a lot of shares and manually toggling them on and off all the time. It would also need some kind of error checking system to avoid deleting unsynced files.
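One way such an error check could look, independent of Syncthing: before deleting a local working copy, compare it file by file against the Desktop copy and list anything that is missing or different there. This is only a sketch under assumptions: it expects the Desktop tree to be reachable as an ordinary directory (for example through an SSHFS mount), and the function names are made up for illustration.

```python
import hashlib
import tempfile
from pathlib import Path


def file_digest(path):
    """SHA-256 of a file, read in 1 MiB chunks so large files fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def unsynced_files(local_root, remote_root):
    """Relative paths under local_root that are missing or different under remote_root."""
    local_root, remote_root = Path(local_root), Path(remote_root)
    pending = []
    for local_file in sorted(local_root.rglob("*")):
        if not local_file.is_file():
            continue
        rel = local_file.relative_to(local_root)
        remote_file = remote_root / rel
        if not remote_file.is_file() or file_digest(local_file) != file_digest(remote_file):
            pending.append(rel.as_posix())
    return pending


# Small self-contained demo using temporary directories in place of real trees.
with tempfile.TemporaryDirectory() as tmp:
    cache, desktop = Path(tmp, "cache"), Path(tmp, "desktop")
    cache.mkdir()
    desktop.mkdir()
    (cache / "same.txt").write_text("unchanged")
    (desktop / "same.txt").write_text("unchanged")
    (cache / "edited.txt").write_text("new version")
    (desktop / "edited.txt").write_text("old version")
    (cache / "new.txt").write_text("only on laptop")
    demo_pending = unsynced_files(cache, desktop)

print(demo_pending)  # files that should not be deleted locally yet
```

An empty list would mean every local file has an identical copy on the other side. Hashing everything over a slow link is expensive, so on big trees a size and modification time comparison may be a more practical first pass.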

Others have also suggested NFS but I am having a difficult time finding basic info about what it is and what I can expect. How is it different than using SSHFS mounted? Assuming I continue limping along on my existing hardware, do you think it can do any of the local caching type stuff I was hoping for?

Re the hardware, thanks for the feedback! I am only recently learning about this side of computing. Am not a gamer and usually have had laptops, so never got too much into the hardware.

I have actually 2 desktops, both 10+ years old. 1 is a macmini so there is no chance of getting the storage properly installed. I believe the CPU is better and it has more RAM because it was upgraded when it was my main machine. The other is a “small” tower (about 14") picked up cheaply to learn about PCs. Has not been upgraded at all other than SSD for the system drive. Both running debian now.

In another comment I ran iperf3 Laptop (wifi) —> Desktop (ethernet) which was about 80-90MBits/s. Whereas Desktop —> OtherDesktop was in the 900-950 MBits/s range. So I think I can say the networking is fine enough when it’s all ethernet.
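To put those iperf3 numbers in file-copy terms, here is a rough back-of-the-envelope conversion. It assumes the link is the only bottleneck and ignores protocol overhead and disk speed, so real transfers will be somewhat slower.

```python
def transfer_seconds(size_bytes, link_mbits_per_s):
    """Ideal time to move size_bytes over a link measured in megabits per second."""
    return size_bytes * 8 / (link_mbits_per_s * 1_000_000)


one_gb = 1_000_000_000  # 1 GB, decimal

# Midpoints of the ranges quoted above: 80-90 Mbit/s WiFi, 900-950 Mbit/s Ethernet.
for label, mbits in [("wifi", 85), ("ethernet", 925)]:
    seconds = transfer_seconds(one_gb, mbits)
    print(f"{label}: about {mbits / 8:.1f} MB/s, 1 GB in roughly {seconds:.0f} s")
```

So the same 1 GB file is a minute and a half over this WiFi link versus under ten seconds over Ethernet, before USB disk speed even enters the picture.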

One thing I wasn’t expecting from the tower is that it only supports 2x internal HDDs. I was hoping to get all the loose USB devices inside the box, like you suggest. It didn’t occur to me that I could only get the system drive + one extra. I don’t know if that’s common? Or if there is some way to expand the capacity? There isn’t too much room inside the box but if there was a way to add trays, most of them could fit inside with a bit of air between them.

This is the kind of pitfall I wanted to learn about when I bought this machine so I guess it’s doing its job. :)

Efforts to research what I would like to have instead have led me to be quite overwhelmed. I find a lot of people online who have way more time and resources to devote than I do, who want really high performance. I always just want “good enough”. If I followed the advice I found online I would end up with a PC costing more than everything else I own in the world put together.

As far as I can tell, the solution for the miniPC type device is to buy an external drive holder rack. Do you agree? They are sooo expensive though, like $200-300 for basically a box. I don’t understand why they cost so much.

Simplify it down if you can. Forget NFS, SSHFS and syncthing as those are too complex and overkill at the moment. SMB is dead simple in a lot of ways and is hard to mess up.

I would start by figuring out how to get more drives in your desktop. SSDs can be put anywhere but HDDs need to be mounted. I would get two big disks and put them in raid 1 and then get a small disk for the boot drive. TrueNAS is still going to be the easiest route and TrueNAS scale is based on Debian under the hood although its designed to not be modified for reliability.

Forget the USB drives. I did that for a while and it is bad in so many ways. It will come back to haunt you even if they work fine for now.

For the desktop you always could upgrade the ram or CPU if that becomes the bottleneck. If the ram is 8gb or less you should upgrade it. Make sure you are getting ram that is fast enough to keep up with the CPU. You can find more information about your CPU and its ram speed online.

For your WiFi, it actually isn’t bad. You might be ok using it depending on what you are doing.

For the minipc I wouldn’t use it as a NAS. You can but it is probably going to be limiting.

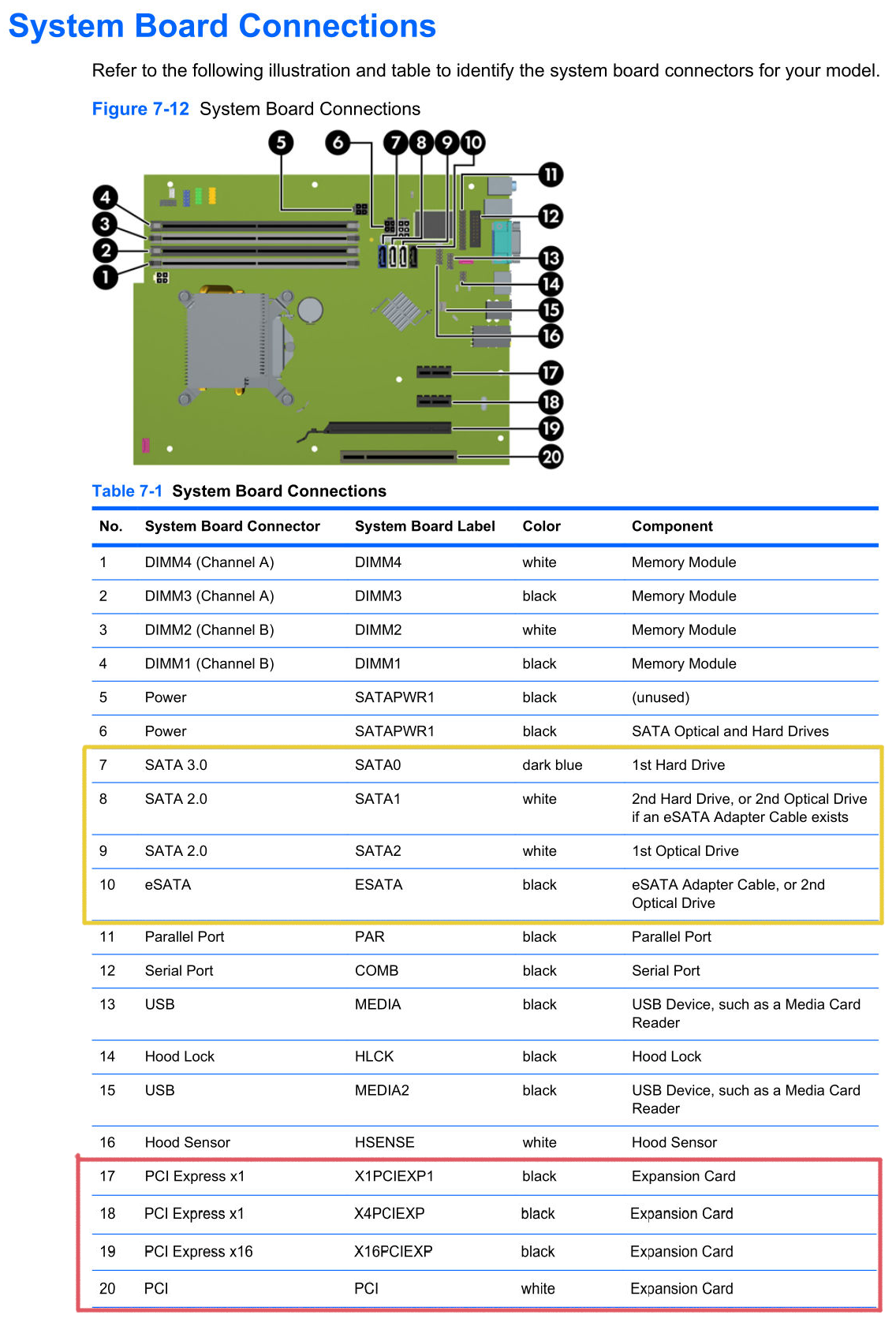

In case your desktop doesn’t have enough SATA ports you could get a PCIe SATA card. They are pretty cheap ($10) and will potentially work better if your hardware is limited.

Forget NFS, SSHFS and syncthing as those are too complex and overkill at the moment. SMB is dead simple in a lot of ways and is hard to mess up.

OTOH, SSHFS and syncthing are already humming along and I’m familiar with them. Is SMB so easy, or does it have other benefits, that would make it better even though I have to start from scratch? It looks like it (and/or NFS) can be administered from the cockpit web interface, which is cool.

Now that I look around I think I actually have a bit of RAM I could put in the PC. MacMini’s original RAM which is DDR3L; but I read you can put it in a device that wants DDR3. So I will do that next time it’s powered off.

Thanks for letting me know I could use an expansion card. I was wondering about that but the service manual didn’t mention it at all and I had a hard time finding information online.

Is this the sort of thing I am looking for: SATA Card 4 Port with 4 SATA Cables, 6 Gbps SATA 3.0 Controller PCI Express Expression Card with Low Profile Bracket Support 4 SATA 3.0 Devices ($23 USD) I don’t find anything cheaper than that. But there are various higher price points. Assuming none of those would be worthwhile on a crummy old computer like I have. Is there any specific RAID support I should look for?

I have only the most cursory knowledge of RAID but can tell it becomes important at some point.

But am I correct in my understanding that putting storage device in RAID decreases the total capacity? For example if I have 2x6TB in RAID, I have 6 TB of storage right?
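The arithmetic behind that question, as a small sketch. It uses the textbook formulas for classic RAID levels with the smallest disk setting the size of every member; ZFS, btrfs and similar systems have their own overheads, so treat the numbers as approximate.

```python
def usable_capacity_tb(level, disk_sizes_tb):
    """Textbook usable capacity for RAID 0, 1 and 5 (smallest disk limits each member)."""
    count = len(disk_sizes_tb)
    smallest = min(disk_sizes_tb)
    if level == 0 and count >= 2:
        return count * smallest        # striping: all space, no redundancy
    if level == 1 and count >= 2:
        return smallest                # mirroring: every disk holds the same data
    if level == 5 and count >= 3:
        return (count - 1) * smallest  # one disk's worth of space goes to parity
    raise ValueError("unsupported level or too few disks")


print(usable_capacity_tb(1, [6, 6]))     # the 2x6TB mirror from the question
print(usable_capacity_tb(5, [6, 6, 6]))  # three disks with single parity
```

So yes: two 6 TB disks in RAID 1 give 6 TB of usable space, and the second disk is purely a live copy of the first.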

Honestly, more than half my data is stuff I don’t care too much about keeping. If I lose all the TV shows I don’t cry over it. Only some of it is stuff I would care enough to buy extra hardware to back up. Those tend to be the smaller files (like documents) whereas the items taking up a lot of space (media files) are more disposable. For these ones “good enough” is “good enough”.

I really appreciate your time already and anything further. But I am still wondering, to what extent is all this helping me solve my original question which is that I want to be able to edit remote files on Desktop as easily as if they were local on Laptop? Assuming i got it all configured correctly, is GIMP going to be just as happy with a giant file lots of layers, undos, etc, on the Desktop as it would be with the same file on Laptop?

Funny you found that card as I have the same one. I would only spend the extra money if your computer doesn’t have enough sata ports.

For raid you do not want hardware raid. It is generally problematic and doesn’t have any error correction. Also, if the controller were to die you could be in trouble.

The reason I say TrueNAS is that it makes the setup pretty straightforward. I have never used cockpit for file shares so I do not know if it works well or not. I do know it will require more setup and maintenance. For the software you could set up either a btrfs or ZFS raid 1 config. TrueNAS is ZFS only but it is tuned for solid performance in a single job.

The reason I say raid 1 is not because it is a backup. Raid is not a backup and should not be treated as such. What it can offer is reduced downtime. If one drive fails you can simply swap out the drive. If you do not have a redundant copy you either need to recreate the data from a backup or try to do a data recovery operation. I would also recommend that you turn on snapshots, as it is really handy to be able to roll back a change quickly. Snapshots are not complete backups but they are a form of version control.

As far as DDR3 goes, it is pretty slow and will not be able to come close to saturating a gigabit connection. Prepare to have slow speeds unfortunately. The good news is that if you get this setup and later decide it is time to upgrade then you can.

2 internal hdds

As I understand the PC case is some sort of small form factor and the motherboard has more SATA ports to plug in disks.

If that’s the case then try to find old pc case in the dumpster or from a neighbour. It should have more space for disks.

Some PCs 15 years ago had incompatible layouts so be a little cautious but gratis is a fair price.

Do you mean take the board out of this case and put it in another, bigger one?

I actually do have a larger, older tower that I fished out of the trash. Came with a 56k modem! But I don’t know if they would fit together. I also don’t notice anywhere particularly suitable to holding a bunch of storage; I guess I would have to buy (or make?) some pieces.

Here is the board configuration for the Small Form Factor:

I did try using #9 and #10 for storage and I seem to recall it kind of worked but didn’t totally work but not sure of the details. But hey, at least I can use a CD drive and a floppy drive at the same time!

As far as I can see you could try to attach 1 ssd to SATA0 (it can dangle in the air) and 2 hard drives to SATA1 and SATA2 (They are capped to 300MB/s and should be attached to the case with screws)

There should be space somewhere to put the drives as I can see in the specification. You will probably need to remove the CD drive.

There is also a question if power supply has enough power sockets to attach to the disks. You could try to find eSATA -> SATA adapter to attach something to the last port.

You probably won’t find the bigger case as motherboard isn’t standard size.

I would probably try to upgrade to standard motherboard size but I would also calculate the cost of consumed electricity in a year vs cost of more efficient hardware.
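That electricity comparison is simple enough to sketch. All the numbers in the example calls (wattages, price per kWh, hardware cost) are invented placeholders; plug in what a power meter and your own bill say.

```python
def yearly_energy_cost(avg_watts, price_per_kwh):
    """Cost of running a machine 24/7 for a year at a given average power draw."""
    kwh_per_year = avg_watts * 24 * 365 / 1000
    return kwh_per_year * price_per_kwh


def payback_years(old_watts, new_watts, price_per_kwh, hardware_cost):
    """Years until the power savings of new hardware cover its purchase price."""
    saving = yearly_energy_cost(old_watts, price_per_kwh) - yearly_energy_cost(new_watts, price_per_kwh)
    if saving <= 0:
        return float("inf")  # the new machine never pays for itself on power alone
    return hardware_cost / saving


# Placeholder figures: a 100 W old desktop vs. a 50 W replacement at 0.25 per kWh.
print(yearly_energy_cost(100, 0.25))
print(payback_years(100, 50, 0.25, 219))
```

If the server is only switched on when needed rather than running around the clock, scale the hours accordingly and the case for new hardware gets weaker.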

I have a very similar setup to you, and I use SyncThing without issue for the important files (which I keep in my Documents directory to make it easy to remember).

Easiest for this might be NextCloud. Import all the files into it, then you can get the NextCloud client to download or cache the files you plan on needing with you.

hmm interesting idea. I do not get the idea that nextcloud is reliably “easy” as it’s kind of a joke how complex it can be.

Someone else suggested WebDAV which I believe is the filesharing Nextcloud uses. Does Nextcloud add anything relevant above what’s available from just WebDAV?

I’d say mostly because the client is fairly good and works about the way people expect it to work.

It sounds very much like a DropBox/Google Drive kind of use case and from a user perspective it does exactly that, and it’s not Linux-specific either. I use mine to share my KeePass database among other things. The app is available on just about any platform as well.

Yeah NextCloud is a joke in how complex it is, but you can hide it all away using their all in one Docker/Podman container. Still much easier than getting into bcachefs over usbip and other things I’ve seen in this thread.

Ultimately I don’t think there are many tools that can handle caching, downloads, going offline, reconcile differences when back online, in a friendly package. I looked and there’s a page on Oracle’s website about a CacheFS but that might be enterprise only, there’s catfs in Rust but it’s alpha, and can’t work without the backing filesystem for metadata.

Nextcloud AIO in docker is dead simple and has been reliable for me.

The sync client is capable of syncing the whole tree as remote pointers that don’t take up space until you access a file, at which point it downloads it locally. You can also set files to always be local.

Nextcloud has a desktop app that can sync folders.

One of the few times I miss Files-on-demand for Win11. Connect an Office365 library with 500 GB to my laptop with a 128 GB hard drive. Integrates with file explorer, only caches locally what you open, and after a while you can “free space”, meaning deleting the locally cached version. NextCloud has the same on Win11 because it’s an OS feature.

sounds sweet! Perfectly what I am looking for.

It’s so rare to be jealous of windows users!!

I do find this repo: jstaf/onedriver: A native Linux filesystem for Microsoft OneDrive. So I guess in theory it would be possible in linux? If you could apply it to a different back end…

It’s certainly possible, if nothing else then with the use of FUSE. But I have not yet seen a project that can do this.

What you suggest sounds a lot like the “Briefcase” that was in Windows 9x. I don’t know of something similar, especially not something integrated into Linux.

The easiest way might be to setup SyncThing to share all of your different folders and then subscribe to those you need on your laptop. Just be aware that if you delete a file on your laptop it will also be deleted on your desktop on the next sync. Unsubscribe from the folder first before freeing up the disk space.

if you delete a file on your laptop it will also be deleted on your desktop on the next sync

This is my fear! I have done it before… Forgetting something is synced and deleting what I thought was “an extra copy” only to realize later that it propagated to the original.

If you’re on Linux I’d recommend using btrfs, or bcachefs with snapshots. It’s basically like time machine on MacOS. That way if you accidentally delete something you can still recover it.

- What’s your network performance look like? 100mbit? Gigabit?

- External drives are terribly slow, USB doesn’t have great throughput. Also they’re unreliable, do you have backups? I’d look into making those drives internal (SATA) which has much better throughput. I use one external drive on USB3 for duplication, and it’s noticeably slower on file transfers, like 40%.

- For remote access to your files, look into Tailscale. You run it on your laptop and server (or any compatible device in your network), and it provides a virtual mesh network that functions like a LAN between devices.

- Syncthing is great, but it just keeps files in sync, entire folders of them. So if space is tight on the laptop, it won’t really help, not easily anyway.

- Resilio Sync has Selective Sync, where it can index a folder and store that index on any device participating in that sync job. Then you can select which files to sync at any time.

- In another comment I ran iperf3 Laptop (wifi) -> Desktop (ethernet) which was about 80-90MBits/s. Whereas Desktop -> OtherDesktop was in the 900-950 MBits/s range. So I think I can say the networking is fine enough when it’s all ethernet. Is there some other kind of benchmarking to do?

- Just posted a more detailed description of the desktops in this comment (4th paragraph). It’s not ideal but for now it’s what I have. I did actually take the time (gnome-disks benchmarking) to test different cables, ports, etc to find the best possible configuration. While there is an upper limit, if you are forced to use USB, this makes a big difference.

- Other people suggested ZeroTier or VPNs generally. I don’t really understand the role this component would be playing? I have a LAN and I really only want local access. Why the VPN?

- Ya, I have tried using syncthing for this before and it involves deleting stuff all the time then re-syncing it when you need it again. And you need to be careful not to accidentally delete while synced, which could destroy all files.

- Resilio I used it a long time ago. Didn’t realize it was still around! IIRC it was somewhat based on bittorrent with the idea of peers providing data to one another.

The VPN is to give you access to your files from anywhere, since you don’t have the storage capacity on your laptop for all of them.

If you have an encrypted connection to home, laptop storage isn’t a concern.

As a benefit, this also solves the risk of losing files that are only on the laptop, by keeping them at home.

Yea, Syncthing has its moments (and uses - I keep hundreds of gigs between 5 phones and 5 laptops/desktops in sync with it).

Resilio does use the bittorrent protocol, but uses keys and authorization for shares. Give it a try, it may address your need to access files remotely. I use it to access my media (about 2TB) which clearly can’t be sync’d to my laptop (or phone). I can grab any file, at any time.

You have two problems.

Transferring between your laptop and desktop is slow. There’s a bunch of reasons that this could be. My first thought is that the desktop’s got a slow 100mbps nic or not enough memory. You could also be using something that’s resource intensive and slow like zfs/zpools or whatever. It’s also possible your laptop’s old g WiFi is the bottleneck or that with everything else running at the same time it doesn’t have the memory to hold 40tb worth of directory tree.

Plug the laptop into the Ethernet and see if that straightens up your problems.

You want to work with the contents of desktop while away from its physical location. Use a vpn or overlay network for this. I have a complex system so I use nebula. If you just want to get to one machine, you could get away with just regular old openvpn or wireguard.

E: I just reread your post and the usb is likely the problem. Even over 2.0 it’s godawful. See if you can migrate some of those disks onto the sata connectors inside your desktop.

Not to mention USB drives have higher failure rates and can be unplugged by mistake.

Thanks!

I elaborated on why I’m using USB HDDs in this comment. I have been a bit stuck knowing how to proceed to avoid these problems. I am willing to get a new desktop at some point but not sure what is needed and don’t have unlimited resources. If I buy a new device, I’ll have to live with it for a long time. I have about 6 or 8 external HDDs in total. Will probably eventually consolidate the smaller ones into a larger drive which would bring it down. Several are 2-4TB, could replace with 1x 12TB. But I will probably keep using the existing ones for backup if at all possible.

Re the VPN, people keep mentioning this. I am not understanding what it would do though? I mostly need to access my files from within the LAN. Certainly not enough to justify the security risk of a dummy like me running a public service. I’d rather just copy files to an encrypted disk for those occasions and feel safe with my ports closed to outsiders.

Is there some reason to consider a VPN for inside the LAN?

You’re getting a lot of advice in this thread and it’s all pretty good, but not all of it seems to answer the problems you have in your order of priority or under your constraints. I’ll try to give an explanation of why I think my advice will do so then give it.

Getting off usb will speed up file access and increase the number of operations you can do from the laptop on your lan. Some stuff will still need to be copied over locally, normal people like us just can’t afford the kind of infrastructure that lets you do everything over the lan. For those things, rsync is perfectly good, and they’re most likely going to be enough of an edge case that it won’t be very often.
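For the copy-it-locally cases, a fetch and put-back pair with rsync could be sketched like this. The helper only builds the command; the host alias `desktop` and the paths are hypothetical, while `--archive`, `--update`, `--itemize-changes` and `--dry-run` are standard rsync options.

```python
import shlex


def rsync_command(source, destination, dry_run=True):
    """Build an rsync command that copies source into destination.

    --update skips files that are newer on the receiving side, so putting
    things back does not clobber a newer copy. dry_run defaults to True so
    the first run only reports what would change.
    """
    cmd = ["rsync", "--archive", "--update", "--itemize-changes"]
    if dry_run:
        cmd.append("--dry-run")
    cmd += [source, destination]
    return cmd


# Hypothetical host alias and paths; the trailing slashes mean "contents of".
remote = "desktop:/srv/storage/project/"
local = "/home/me/cache/project/"

print(shlex.join(rsync_command(remote, local)))                 # fetch, preview only
print(shlex.join(rsync_command(local, remote, dry_run=False)))  # put back for real
```

Saving one such pair per project is roughly what a luckybackup profile does through the GUI.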

When you’re ready, and from your responses in this thread I’d say you are, a vpn doesn’t expose you to much security risk if any. There are caveats to that, but if you’re doing something like openvpn or wireguard it’s all encrypted and key based and basically ain’t nobody getting into it unless they were to get a key off an old computer you use and didn’t wipe before throwing out or something. That would solve your remote access bonus problem. No pressure and in your own time, of course.

You are me twenty years ago.

Cobbling together solutions from what’s available at the cost of the parts from the hardware store. Serial experiments lain but shot in the trailer park boys set. Hackers with the cast of my name is earl.

Don’t ever change.

So you want to kick the bad habit but don’t have enough physical space in your desktop’s case or enough sata ports! You have a bigger tower case but don’t know if it’ll really hold the drives you want.

The best bet is to transplant the motherboard and power supply from your sff desktop into the big case. If the big case has at least three 5 1/4 bays you can use a bracket to go from 3 big bays to 4 or 5 smaller 3.5” hdd bays. I’d recommend 4 instead of 5, more on that later.

If the big tower case has the little dangly 2x 3.5 bay cage hanging down from its cd cage, you can use four strips of sheet metal and a carpenters square (or the square corner of some copy paper) to make a column of hdd mounting space all the way to the floor of the chassis. Just remember to use vibration damping grommets.

Make sure when you’re filling your tower up with drives to put some fans blowing on em. Drives need to be kept cool for maximum life. Those 3 cd bay to 4 hdd bay adapter brackets are nice for that because they usually have a fan mount or one included.

Now you need sata (or maybe ide) ports to plug all these in. Someone else already said to use those cheap little sata expanders and those are great. I used an old cheap pc mounted in a salvaged case just like you might with four of em back in the day.

You’ll actually want to use the tower’s power supply if it has one and it works and matches the sff desktop’s connectors, because it probably has more power capacity than the sff desktop’s supply. You may need some molex or sata splitters to get power to all your drives.

Consider mergerfs and snapraid once you have this wheezing Frankenstein’s monster operational.

Mergerfs displays all the drives as one big file system to the system and to users. So if drive one had /pics/dog.jpg and drive two had /pics/cat.jpg then the mergerfs of the two of them would have both pictures in the /pics directory when you open it.
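The idea can be mimicked with a toy model: each drive is a set of paths, and the merged view is their union. This is not how mergerfs is implemented (it is a FUSE filesystem with configurable policies for picking a drive); the "first drive that has the path wins" rule here is an assumption made for the illustration.

```python
def merged_listing(drives):
    """Map every path to the first drive (in the given order) that contains it."""
    merged = {}
    for drive, paths in drives.items():
        for path in sorted(paths):
            merged.setdefault(path, drive)  # keep the first drive seen for a path
    return merged


# The two-drive example from the comment above.
drives = {
    "drive1": {"/pics/dog.jpg"},
    "drive2": {"/pics/cat.jpg"},
}

view = merged_listing(drives)
pics = sorted(path for path in view if path.startswith("/pics/"))
print(pics)            # both pictures show up in one /pics directory
print(view[pics[0]])   # the file still physically lives on a single drive
```

The second print is the important detail: pooling changes what you see, not where the data lives, so losing one drive only loses the files stored on that drive.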

Snapraid is like raid5 (really more like zpools, because you can have many parity devices), but it only runs once a day or whatever and is basically a snapshot.
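For anyone wondering what "parity" buys you, here is the RAID5-style single parity idea in a few lines. It is a simplified illustration with equal-sized blocks, not SnapRAID's actual on-disk format, and additional parity levels use different math.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, column by column."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Three "data drives", each holding one 4-byte block.
data = [b"dogs", b"cats", b"fish"]

# The parity drive stores the XOR of all data blocks.
parity = xor_blocks(data)

# Pretend the second drive died: XOR the survivors with the parity to rebuild it.
rebuilt = xor_blocks([data[0], data[2], parity])
print(rebuilt)
```

Because the parity is only recomputed when a sync runs, anything changed since the last sync is not protected yet, which is the "basically a snapshot" part.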

Anyway sorry for the tangent. Post some pics of the tower case or its model number or whatever and I can give better advice about filling it with drives.

NFS and ZeroTier would likely work.

When at home NFS will be similar to a local drive, though a bit slower. Faster than SSHFS. NFS is often used to expand limited local space.

I expect a cache layer on NFS is simple enough, but that is outside my experience.

The issue with syncing, is usually needing to sync everything.

What would be the role of Zerotier? It seems like some sort of VPN-type application. I don’t understand what it’s needed for though. Someone else also suggested it albeit in a different configuration.

Just doing some reading on NFS, it certainly seems promising. Naturally ArchWiki has a fairly clear instruction document. But I am having a hard time seeing what it is exactly? Why is it faster than SSHFS?

Using the Cache with NFS > Cache Limitations with NFS:

Opening a file from a shared file system for direct I/O automatically bypasses the cache. This is because this type of access must be direct to the server.

Which raises the question what is “direct I/O” and is it something I use? This page calls direct I/O “an alternative caching policy” and the limited amount I can understand elsewhere leads me to infer I don’t need to worry about this. Does anyone know otherwise?

The issue with syncing, is usually needing to sync everything.

yes this is why syncthing proved difficult when I last tried it for this purpose.

Beyond the actual files it would be really handy if some lower-level stuff could be cached/synced between devices. Like thumbnails and other metadata. To my mind, remotely perusing Desktop filesystem from Laptop should be just as fast as looking through local files. I wouldn’t mind having a reasonable chunk of local storage dedicated to keeping this available.

NFS is generally the way network storage appliances are accessed on Linux. If you’re using a computer you know you’re going to be accessing files on in the long term it’s generally the way to go since it’s a simple, robust, high performance protocol that’s used by pros and amateurs alike. SSHFS is an abuse of the ssh protocol that allows you to mount a directory on any computer you can get an ssh connection to. You can think of it like VSCode remote editing, but it’ll work with any editor or other program.

You should be able to set up NFS with write caching, etc that will allow it to be more similar in performance to a local filesystem. Note that you may not want write caching specifically if you’re going to suddenly disconnect your laptop from the network without unmounting the share first. Your actual performance might not be the same, especially for large transfers, due to the throughput of your network and connection quality. In my general experience sshfs is kind of slow especially when accessing many different small files, and NFS is usually much faster.

Thanks this comment is v helpful. A persuasive argument for NFS and against sshfs!

ZeroTier allows for a mobile, LAN-like experience. If the laptop is at a café, the files can be accessed as if at home, within network performance limits.

If there is sufficient RAM on the laptop, Linux will cache a lot of metadata in other cache layers without NFS-Cache.

NFS-Cache is a specific cache for NFS, and does not represent all caching that can be done of files over NFS. “Direct I/O” is also a specific thing, and should not be generalized in the meanings of “direct” and “I/O”.

Let’s skip those entirely for now as I cannot simply explain either. I doubt either will matter in your use case, but look back if performance lags.

One laptop accessing one NFS share will have good performance on a quiet local network.

NFS is an old protocol that is robust and used frequently. NFSv3 is not encrypted. NFSv4 has support for encryption. (ZeroTier can handle the encryption.)

SSHFS is a pseudo file system layered over SSH. SSH handles encryption. SSHFS is maybe 15 years old and is aimed at convenience. SSH is largely aimed at moving streams of text between two points securely. Maybe it is faster now than it was.

zerotier + rclone sftp/scp mount w/ vfs cache? I haven’t tried using vfs cache with anything other than a cloud mount but it may be worth looking at. rclone mounts work just as well as sshfs; zerotier eliminates network issues

What would be the role of Zerotier? It seems like some sort of VPN-type application. What do I need that for?

rclone is cool and I used it before. I was never able to get it to work really consistently so always gave up. But that’s probably user error.

That said, I can mount network drives and access them from within the file system. I think GVFS is doing the lifting for that. There are a couple different ways I’ve tried including with rclone, none seemed superior performance-wise. I should say the Desktop computer is just old and slow; there is only so much improvement possible if the files reside there. I would much prefer to work on my Laptop directly and move them back to Desktop for safe keeping when done.

“vfs cache” is certainly an intriguing term

Looks like maybe the main documentation is

rclone mount > vfs-file-caching, and specifically --vfs-cache-mode full:

In this mode the files in the cache will be sparse files and rclone will keep track of which bits of the files it has downloaded.

So if an application only reads the starts of each file, then rclone will only buffer the start of the file. These files will appear to be their full size in the cache, but they will be sparse files with only the data that has been downloaded present in them.
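A toy model may make the quoted behaviour easier to picture. The class below only tracks which byte ranges of one remote file have been "downloaded"; it is an illustration of the idea in the quote, not rclone's code, and the class and method names are made up.

```python
class SparseCacheModel:
    """Toy model of a sparse cache entry: full apparent size, partial real data."""

    def __init__(self, size):
        self.size = size   # apparent size, equal to the remote file's size
        self.ranges = []   # sorted, non-overlapping (start, end) byte ranges held locally

    def read(self, offset, length):
        """Record that bytes [offset, offset + length) were fetched and cached."""
        start, end = offset, min(offset + length, self.size)
        kept = []
        for s, e in self.ranges:
            if e < start or s > end:
                kept.append((s, e))                    # untouched by this read
            else:
                start, end = min(s, start), max(e, end)  # merge overlapping or adjacent ranges
        kept.append((start, end))
        self.ranges = sorted(kept)

    def cached_bytes(self):
        """Bytes actually present on local disk."""
        return sum(e - s for s, e in self.ranges)


model = SparseCacheModel(1000)   # a 1000-byte remote file
model.read(0, 100)               # an application reads the start of the file
model.read(50, 100)              # an overlapping read extends the cached range
model.read(500, 10)              # a seek to the middle caches a second small range
print(model.size, model.cached_bytes(), model.ranges)
```

In other words, the cached file looks complete to applications, but only the parts that were actually read take up space on Laptop; files that were read in full stay fully available locally until the cache evicts them.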

I’m not totally sure what this would be doing, if it is exactly what I want, or close enough? I am remembering now one reason I didn’t stick with rclone which is I find the documentation difficult to understand. This is a really useful lead though.

Zerotier + sshfs is something I use consistently in similar situations to yours - and yes, zerotier is similar to a vpn. Using it for a constant network connection makes it less critical to have everything mirrored locally. . . But I guess this doesn’t solve your speed issue.

I’m not an expert in rclone. I use it for connecting to various cloud drives and have occasionally used it as an alternative to sshfs. I’ve used vfs-cache for cloud syncs but not quite in the manner you are trying. I do see there is a vfs cache read-ahead option that might help? Agreed on the documentation, sometimes their forum helps.

- wifi is less responsive than ethernet

- You don't even need encryption on a local connection (no need for SSH) if it is never exposed outside the network.

- If you have that many external HDDs, I would rather get a NAS and call it a day; it has everything configured for you as well.

- I don't know how much data you work with, but you could get a larger SSD to store whatever you visit often from your HDDs.

Strong disagreement on №2. That kinda thinking is how you get devices on your home network to join a malicious botnet without your knowledge and more identity theft. ALL network communications should assume that a malicious actor may be present and use encryption-in-transit for anything remotely approaching private, identifiable, or sensitive.

Removed by mod

Be like Andrew Taint😎

On trial for assault, rape and human trafficking in multiple countries after being found on the scene at the raid of a sex slavery operation?

Why is VPN to your main device not an option?

I don’t know what that means

They mean to keep the files only on your desktop, keep it always on, and use VPN from your laptop to your desktop to access whatever files you want at that time from the desktop directly.

Nevermind. I read your question way wrong. You have a local network and want better access to things there.

Performance varies with the filesystem and with the network service that exposes it. SMB will have the worst performance, NFS possibly the best. You're also going to have lag from however you're accessing these files on the client, so maybe there's an issue there.

git-annex maybe?

Or just git depending on the use case.

Can you upgrade the desktop? What speed is your laptop's WiFi?

I just use rsync manually until I have syncthing working, but these don't really solve slowdown issues and aren't mounted. I would look into a better NIC and/or storage for the desktop, or possibly your router.
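By "manually" I mean something like the round trip below: pull a tree to the laptop, work on it, push it back. This is only a sketch that builds the rsync commands so you can eyeball them first; the directory paths are hypothetical, and it defaults to `--dry-run` so nothing is copied until you turn that off.

```python
import shlex

def _rsync(src, dst, dry_run):
    # -a keeps permissions/times, -v lists files,
    # --update skips files that are newer on the receiving side.
    cmd = ["rsync", "-av", "--update"]
    if dry_run:
        cmd.append("--dry-run")
    # Trailing slashes: copy the contents of src into dst.
    return cmd + [src.rstrip("/") + "/", dst.rstrip("/") + "/"]

def pull_cmd(host, remote_dir, local_dir, dry_run=True):
    """Desktop -> Laptop: fetch a tree into the local working copy."""
    return _rsync(f"{host}:{remote_dir}", local_dir, dry_run)

def push_cmd(host, remote_dir, local_dir, dry_run=True):
    """Laptop -> Desktop: put edited files back where they came from."""
    return _rsync(local_dir, f"{host}:{remote_dir}", dry_run)

if __name__ == "__main__":
    # Paths below are placeholders for illustration only.
    args = ("desktop.lan", "/mnt/hdd1/project", "/home/me/cache/project")
    print(shlex.join(pull_cmd(*args)))
    print(shlex.join(push_cmd(*args)))
```

I left out `--delete` on purpose. Without it, a file removed on one side comes back on the next sync, which is annoying but safer than losing something by accident.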

Try using something like iperf to measure the raw speed of the connection between your two systems, see if it's what it should be (around 300-600 Mbps for wireless to wired locally), and try to narrow down where the bottleneck is.

A few weeks ago I put some serious time/brainpower into the network and got it waaaay smoother and faster than before. Finally implemented some upgraded hardware that has been sitting on a shelf for too long.

I tried iperf. Actually iperf3 because that’s the first tutorial I found. Do you have any opinion on iPerf vs iperf3? On Desktop I ran:

iperf3 -s -p 7673

On Laptop I am currently doing some stuff I didn't want to quit, so this may not be a totally fair test. I'll try re-running it later. That said, I ran:

iperf3 -c desktop.lan -p 7673 --bidir

And what looks like a summary at the bottom:

[ ID] Interval          Transfer     Bitrate         Retr
[  5] 0.00-10.00 sec    102 MBytes   86.0 Mbits/sec  152   sender
[  5] 0.00-10.00 sec    102 MBytes   85.6 Mbits/sec        receiver

I actually have AnotherDesktop on the LAN, also connected via ethernet. Going from Laptop -> AnotherDesktop gets similar results to the above.

However, going AnotherDesktop -> Desktop gets 10x better results:

[  5] 0.00-10.00 sec    1.09 GBytes  936 Mbits/sec   0     sender
[  5] 0.00-10.00 sec    1.09 GBytes  933 Mbits/sec         receiver

Laptop has an Intel Dual Band Wireless-AC 8260 whose max speed is 867 Mbps. It probably isn't the bottleneck. Although with the distro running at the moment (Fedora) I have a LOT of problems with everything, so possibly things aren't set up ideally here.

I still haven't upgraded the actual wireless access point for the network; I don't recall the max speed of the current WAP, but it could be around 100 Mbps.
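To put the two measurements side by side, here is a quick back-of-envelope conversion. It assumes the iperf3 bitrates are sustained and ignores protocol overhead and disk speed, so real transfers will be slower. The 1 GB example size is arbitrary.

```python
def transfer_seconds(size_bytes, mbits_per_sec):
    """Ideal time to move size_bytes over a link of the given bitrate.

    Uses 1 Mbit = 1,000,000 bits, matching how iperf3 reports Mbits/sec.
    """
    return size_bytes * 8 / (mbits_per_sec * 1_000_000)

# Sender-side bitrates from the two iperf3 runs above.
rates = {
    "Laptop -> Desktop (WiFi)": 86.0,
    "AnotherDesktop -> Desktop (ethernet)": 936.0,
}

ONE_GB = 1_000_000_000  # arbitrary example size

for label, rate in rates.items():
    mbytes_per_sec = rate / 8
    secs = transfer_seconds(ONE_GB, rate)
    print(f"{label}: about {mbytes_per_sec:.1f} MB/s, 1 GB in about {secs:.0f} s")
```

So the WiFi path works out to roughly 11 MB/s against roughly 117 MB/s over ethernet, which lines up with the WAP being the limit rather than the laptop's wireless card.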

So this is an interesting path to optimize. However, I am still interested in solving the original problem, because even when I am directly using Desktop, things are slow. I do not really want to upgrade it if I can get away with a software solution. There are many items on my list of projects and purchases that I'd rather concentrate on.

Wifi is always the bottleneck.

A few ideas/hints: If you are up for some upgrading/restructuring of storage, you could consider a distributed filesystem: https://wikiless.org/wiki/Comparison_of_distributed_file_systems?lang=en.

Also check fuse filesystems for weird solutions: https://wikiless.org/wiki/Filesystem_in_Userspace?lang=en

Alternatively perhaps share usb drives from ‘desktop’ over ip (https://www.linux.org/threads/usb-over-ip-on-linux-setup-installation-and-usage.47701/), and then use bcachefs with local disk as cache and usb-over-ip as source. https://bcachefs.org/

If you decide to expose your 'desktop', then you could also log in remotely and just work with the files directly on 'desktop'. This of course depends on the usage pattern of the files.