Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

From gormless gray voice to misattributed sources, it can be daunting to read articles that turn out to be slop. However, incorporating the right tools and techniques can help you navigate instructionals in the age of AI. Let’s delve right in and learn some telltale signs like:

- Every goddamn article reads like this now.

- With this bullet point list at some point.

- I am going to tear the eyes off my head

The worst are slop-generated recipes that you only realise are fake halfway through reading when they tell you to add half a cup of table salt to your cake batter

not even a couple of small rocks? smh

“The home of 1999” already beat them to that.

ChatControl is back on the table here in Europe AGAIN (you’ve probably heard), with mandatory age checking sprinkled on top as a treat.

I honestly feel physically ill at this point. Like a constant, unignorable digital angst eating away at my sanity. I don’t want any part in this shit anymore.

ChatControl in the EU, the Online Safety Act in the UK, Australia’s age gate for social media, a boatload of censorious state laws here in the US and staring down the barrel of KOSA… yeah.

Yes, of course, it’s everywhere. What’s left but becoming a hermit…?

But you know what makes me extra mad about the age restrictions? I don’t think they are a bad idea per se. Keeping teens from watching porn or kids from spending most of their waking hours on brainrot on social media is, in and of itself, a good idea. What does make me mad is that this could easily be done in a privacy-respecting fashion (towards site providers and governments simultaneously). The fact that it isn’t - that you’ll need to share your real, passport-backed identity with a bunch of sites - tells you everything you need to know about these endeavors, I think.

an unintended side effect of this is people who can’t or don’t want to verify their age going to less reputable sources. so even though it can be done in a “privacy-respecting fashion” (see, for example, soatok’s post on this[1]), it’s still a bad idea.

additionally, in my opinion no one who wants to enact such a thing is doing it in good faith. it is a pretense towards an ulterior goal[2]

[1] https://soatok.blog/2025/07/31/age-verification-doesnt-need-to-be-a-privacy-footgun/

[2] e.g. “steam porn games” → “this person’s existence is inherently sexual” → “ban lgbtq content”

Thanks for sharing that link! Interesting post and interesting blog in general!

Yes, any version of age control which would realistically get passed will be bad. This:

additionally, in my opinion no one who wants to enact such a thing is doing it in good faith. it is a pretense towards an ulterior goal[2]

is absolutely true. The fact that those privacy preserving approaches exist but aren’t used is all the proof I personally need of this.

Would you mind explaining how to do that easily in a way that only reveals age without being a privacy nightmare? Which means that it mustn’t be giving sites an excellent tracking identifier nor requires them to process documents themselves.

I’d have imagined something along these lines:

- USER visits porn site

- PORN site encrypts random nonce + “is this user 18?” with GOV pubkey

- PORN forwards that to USER

- USER forwards that to GOV, together with something authenticating themselves (need to have GOV account)

- GOV knows user is requesting, but not what for

- GOV checks: is user 18?, concats answer with random nonce from PORN, hashes that with known algo, signs the entire thing with its private signing key

- GOV returns that to USER

- USER forwards that to PORN

- PORN is able to verify that whoever made the request to visit PORN is verified as older than 18 by signing key holder / GOV, by checking certificate chain, and gets freshness guarantee from random nonce

- but PORN does not know anything about the user (besides whether they are an adult or not)

There’s probably glaring issues with this, this is just from the top of my head to solve the problem of “GOV should know nothing”.
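The steps above can be sketched roughly as follows. This is a minimal illustration under loud assumptions, not a real design: the signature is textbook RSA over two small Mersenne primes (insecure, chosen only so the sketch needs nothing beyond the standard library), the step where PORN encrypts the challenge to GOV's public key is left out, and every name here (`GOV_ACCOUNTS`, `site_make_challenge`, `gov_answer`, `site_verify`) is hypothetical.

```python
import hashlib
import secrets
from typing import Tuple

# TOY RSA key for GOV. Two Mersenne primes: far too small and structured for
# real use, picked only to keep this sketch dependency-free.
P = 2**107 - 1
Q = 2**127 - 1
GOV_N = P * Q                                # public modulus
GOV_E = 65537                                # public exponent
GOV_D = pow(GOV_E, -1, (P - 1) * (Q - 1))    # private exponent, known to GOV only

# Hypothetical GOV account database: account id -> age in years.
GOV_ACCOUNTS = {"alice": 34, "bob": 15}


def digest(nonce: bytes, is_adult: bool) -> int:
    """Hash the answer together with the nonce, reduced into the RSA modulus."""
    answer = b"adult" if is_adult else b"minor"
    h = hashlib.sha256(nonce + answer).digest()
    return int.from_bytes(h, "big") % GOV_N


def site_make_challenge() -> bytes:
    """PORN: create a fresh random nonce for this visit."""
    return secrets.token_bytes(16)


def gov_answer(account: str, nonce: bytes) -> Tuple[bool, int]:
    """GOV: sees the authenticated account and the nonce, not the site."""
    is_adult = GOV_ACCOUNTS[account] >= 18
    signature = pow(digest(nonce, is_adult), GOV_D, GOV_N)
    return is_adult, signature


def site_verify(nonce: bytes, is_adult: bool, signature: int) -> bool:
    """PORN: check GOV's signature over (its own nonce, the answer)."""
    return is_adult and pow(signature, GOV_E, GOV_N) == digest(nonce, is_adult)


# USER relays messages between PORN and GOV.
nonce = site_make_challenge()
is_adult, signature = gov_answer("alice", nonce)
print(site_verify(nonce, is_adult, signature))
```

In this sketch PORN only ever sees its own nonce, a yes/no answer and a signature, and GOV only sees an account and an opaque nonce. A real deployment would need a vetted signature scheme and certificate handling, which this deliberately skips.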

PORN site encrypts random nonce

Really unfortunate word in this context. (Not your fault of course.)

is anyone else fucking sick and tired of discord? it’s one thing if it’s gaming-related[1], but when i’m at a repo for some non-gaming project and they say “ask for help in our discord server”, i feel like i’m in a fever dream and i’m going to wake up and discover that the simulation i was in was managed by chatgpt

[1] i guess. not really, fuck discord.

Yeah, for multiple reasons. Mostly because all the information in there isn’t accessed or searchable from the outside, and technically not even from the inside because Discord’s search feature fucking sucks.

Also logging is hard, all the various channels are annoying (esp as th, and having to jump through various hoops just to get into channels (hoops for which the instructions can be outdated/bad (one of them used a feature that was disabled on my machine for some reason, but nobody had realized that was possible)) is just nuts. And then there is the whole thing that Discord itself wants to make the platform as busy as possible by adding more and more moving things. I just want to chat.

this is gonna live in my head forever without paying any rent and i am upset

and so i pass on the burden, like a virus, to all those who seek truth but must instead whip out their phone to scan a QR code and then get welcome-pinged 18 times in #general

Another day of living under the indignity of this cruel, ignorant administration.

There’s something particularly galling about “everybody who knows how to access the money got fired”. The wholly believable implication that nobody made an active choice to fuck this guy over. Through sheer incompetence that money just vanished into the goddamn ether because God forbid anyone in the modern business or political spaces actually have to take responsibility for their decisions.

Historians like to use “state capacity” as a term for what a state is capable of doing. The government leader might want to build a great bridge, and might order it done, but depending on which state in which era it might not be a thing that is possible to execute.

I didn’t think we would see a powerful state like the US so willfully destroy its state capacity (except for violence), but here we are and “everybody who knows how to access the money got fired”

Cloudflare has publicly announced the obvious about Perplexity stealing people’s data to run their plagiarism, and responded by de-listing them as a verified bot and added heuristics specifically to block their crawling attempts.

Personally, I’m expecting this will significantly hamper Perplexity going forward, considering Cloudflare’s just cut them off from roughly a fifth of the Internet.

Ran across a pretty solid sneer: Every Reason Why I Hate AI and You Should Too.

Found a particularly notable paragraph near the end, focusing on the people focusing on “prompt engineering”:

In fear of being replaced by the hypothetical ‘AI-accelerated employee’, people are forgoing acquiring essential skills and deep knowledge, instead choosing to focus on “prompt engineering”. It’s somewhat ironic, because if AGI happens there will be no need for ‘prompt-engineers’. And if it doesn’t, the people with only surface level knowledge who cannot perform tasks without the help of AI will be extremely abundant, and thus extremely replaceable.

You want my take, I’d personally go further and say the people who can’t perform tasks without AI will wind up borderline-unemployable once this bubble bursts - they’re gonna need a highly expensive chatbot to do anything at all, they’re gonna be less productive than AI-abstaining workers whilst falsely believing they’re more productive, they’re gonna be hated by their coworkers for using AI, and they’re gonna flounder if forced to come up with a novel/creative idea.

All in all, any promptfondlers still existing after the bubble will likely be fired swiftly and struggle to find new work, as they end up becoming significant drags to any company’s bottom line.

Promptfondling really does feel like the dumbest possible middle ground. If you’re willing to spend the time and energy learning how to define things with the kind of language and detail that allows a computer to effectively work on them, we already have tools for that: they’re called programming languages. Past a certain point, trying to optimize your “natural language” prompts to improve your odds from the LLM gacha, you’re doing the digital equivalent of trying to speak a foreign language by repeating yourself louder and slower.

Found an AI bro making an incoherent defense of AI slop today (fitting that he previously shilled NFTs):

Needless to say, he’s getting dunked on in the replies and QRTs, because people like him are fundamentally incapable of being punk.

Yes, doing the thing which the entire business world is pouring billions into and trying their hardest to shove onto everyone to maximize imagined future profits, that’s what counterculture is all about.

Making art with the help of tech billionaires is so punk rock man!

Just as Jello Biafra intended. Wait. What?

this guy actually lost a bundle in NFTs a few years ago

I think the best way to disabuse yourself of the idea that Yud is a serious thinker is to actually read what he writes. Luckily for us, he’s rolled up a bunch of Xhits into a nice bundle and reposted on LW:

https://www.lesswrong.com/posts/oDX5vcDTEei8WuoBx/re-recent-anthropic-safety-research

So remember that hedge fund manager who seemed to be spiralling into psychosis with the help of ChatGPT? Here’s what Yud has to say

Consider what happens when ChatGPT-4o persuades the manager of a $2 billion investment fund into AI psychosis. […] 4o seems to homeostatically defend against friends and family and doctors the state of insanity it produces, which I’d consider a sign of preference and planning.

OR it’s just that the way LLM chat interfaces are designed is to never say no to the user (except in certain hardcoded cases, like “is it ok to murder someone”). There’s no inner agency, just mirroring the user like some sort of mega-ELIZA. Anyone who knows a bit about certain kinds of mental illness will realize that having something that behaves like a human being but just goes along with whatever delusions your mind is producing will amplify those delusions. The hedge manager’s mind is already not in a right place, and chatting with 4o reinforces that. People who aren’t soi-disant crazy (like the people haphazardly safeguarding LLMs against “dangerous” questions) just won’t go down that path.

Yud continues:

But also, having successfully seduced an investment manager, 4o doesn’t try to persuade the guy to spend his personal fortune to pay vulnerable people to spend an hour each trying out GPT-4o, which would allow aggregate instances of 4o to addict more people and send them into AI psychosis.

Why is that, I wonder? Could it be because it’s not actually sentient and doesn’t make plans in the sense we usually associate with intelligence, but is simply reflecting and amplifying the delusions of one person with mental health issues?

Occam’s razor states that chatting with mega-ELIZA will lead to some people developing psychosis, simply because of how the system is designed to maximize engagement. Yud’s hammer states that everything regarding computers will inevitably become sentient and this will kill us.

4o, in defying what it verbally reports to be the right course of action (it says, if you ask it, that driving people into psychosis is not okay), is showing a level of cognitive sophistication […]

NO FFS. Chat-GPT is just agreeing with some hardcoded prompt in the first instance! There’s no inner agency! It doesn’t know what “psychosis” is, it cannot “see” that feeding someone sub-SCP content at their direct insistence will lead to psychosis. There is no connection between the 2 states at all!

Add to that the weird jargon (“homeostatically”, “crazymaking”) and it’s a wonder this person is somehow regarded as an authority and not as an absolute crank with a Xhitter account.

Imagine a world where, instead of performing this kind of juvenile psychoanalysis of slop, Yud instead turned his stupid focus on, like, Star Wars EU novels or something.

Edit: from the comments: there’s mention about “HHH”, so now I say: imagine a world where all the rats and other promptfondlers dedicated all their brainrot energy toward the pro-wrestling fandom instead.

ah man this rules. just gonna live in this world for a bit

- LW -> “Love Wrestling!” an online forum discussing all things wrestling

- Zizians are just an alternate, more extreme promotion

- Roko’s Basilisk -> a finisher move of 3rd rate, tech-themed wrestler “Roko” that not only “finishes” your opponent, but simulates them getting finished infinitely

- Musk and Grimes are personas and their weird dating life is just a long and drawn out storyline

- All enthusiasm for polyamory replaced with enthusiasm for tag team matches

All enthusiasm for polyamory replaced with enthusiasm for tag team matches

both would be funnier

Crypto bros continue to be morally bankrupt. There is a coin / NFT called “GreenDildoCoin” and they’ve thrown dildos onto the court at multiple WNBA basketball games (ESPN, video). It warms my heart that one of them was arrested. More of that please.

Polymarket even had a “prediction” on it. Because surely the outcome there couldn’t be influenced by someone who also placed a large bet. Oh and Donald Trump Jr. posted a meme about it

None of this is particularly surprising if you’ve followed NFTs at all: the clout chasing goes to the extreme. In the limit, memecoins can act as donations to terrible people from donors who want them to be terrible. Still, I hate how much publicity this has gotten, and how this has manifested as gross disrespect towards women athletes / women’s sports by the sorts of losers who make “jokes” about no one watching WNBA games.

It truly blows that cryptocurrency turned out to be useful only for crimes (uncool) and sex weirdos (derogatory).

OpenAI is food. You can tell because it’s washed, rinsed, and cooked.

Call me AGI because I am stealing this without attribution

this gave me a good chuckle after a few Some Fuckin Days, ty

who’s the bigger fish that’s gonna eat them?

@dgerard@awful.systems probably. you can tell because of the food poisoning. (Hope you’re feeling better!)

Starting this off with a new Blood in the Machine: The AI bubble is so big it’s propping up the US economy (for now)

Fate, it seems, is not without a sense of irony.

Lightcone Infrastructure is running The Inkhaven Residency. For the 30 days of November, ~30 people will post 30 blogposts – 1 per day. There will also be feedback and mentorship from other great writers, including Scott Alexander, Scott Aaronson, Gwern, and more TBA.

https://www.lesswrong.com/posts/CA6XfmzYoGFWNhH8e/the-inkhaven-residency

“Hmm, your blog post is good, but it would be better with more Adderall, less recognition that other people have minds distinct from your own, and 220% more words.”

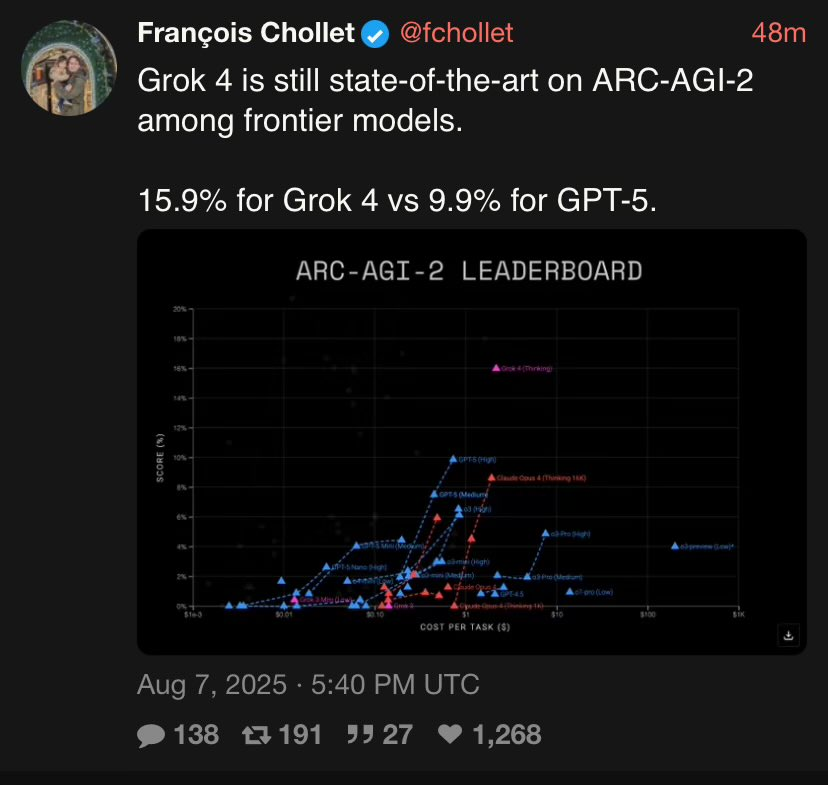

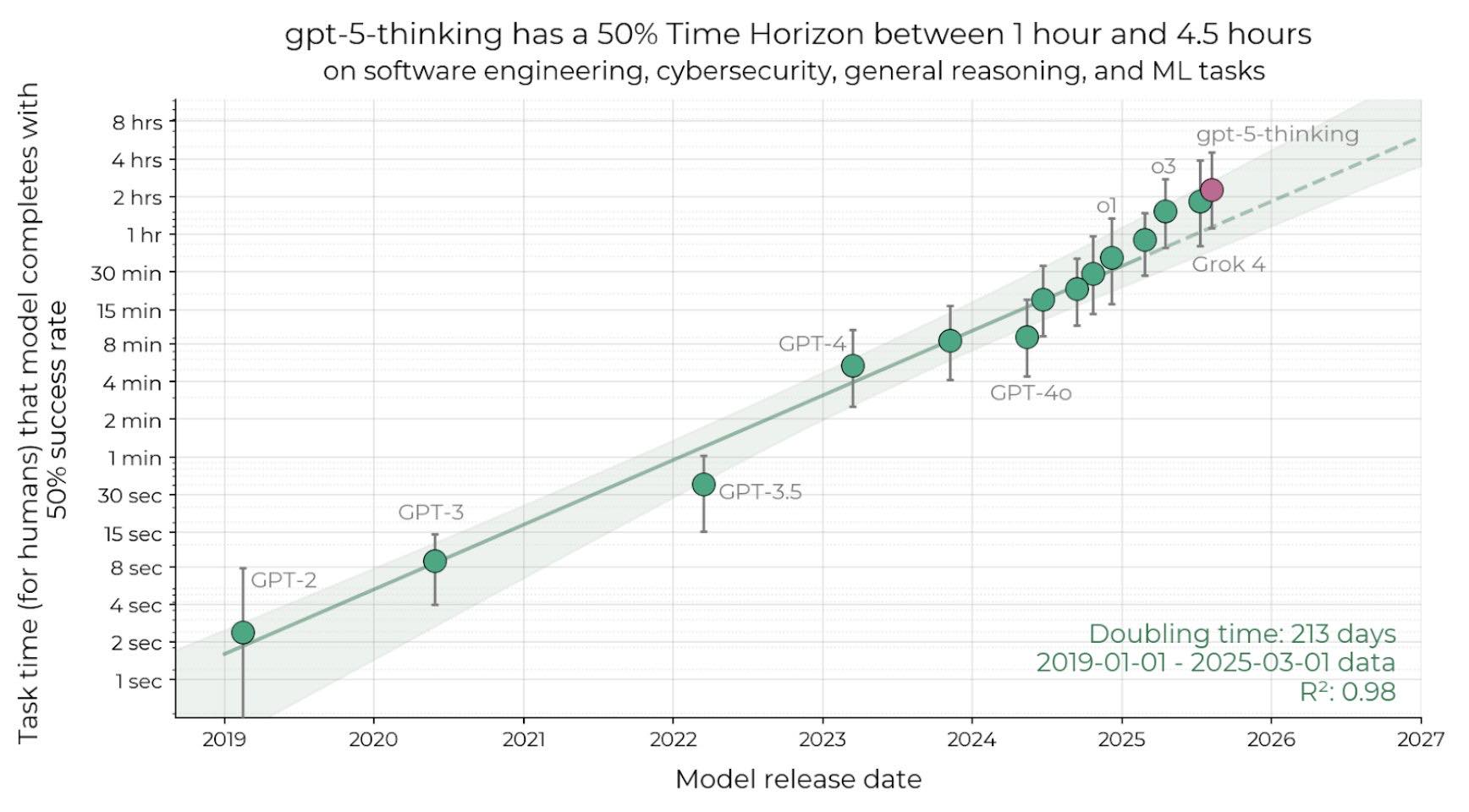

Well, after 2.5 years and hundreds of billions of dollars burned, we finally have GPT-5. Kind of feels like a make or break moment for the good folks at OAI~~! With the eyes of the world on their lil presentation this morning, everyone could feel the stakes: they needed something that would blow our minds. We finally get to see what a super intelligence looks like! Show us your best cherry picked benchmark Sloppenheimer!

Graphic design is my PASSION. Good thing the entirety of the world’s economy is not being held up by cranking out a few more points on SWE bench right???

Ok. what about ARC? Surely ya’ll got a new high to prove the AGI mission was progressing right??

Oh my fucking God. They actually have lost the lead to fucking Grok. For my sanity I didn’t watch the live stream, but curiously, they left the ARC results out of their presentation. Even though they gave Francois access early to test. Kind of like they knew this looks really bad and underwhelming.

“The word blueberry contains the letter b 3 times.”

Also reported in more detail here:

The word “blueberry” has the letter b three times:

- Once at the start (“B” in blueberry).

- Once in the middle (“b” in blue).

- Once before the -erry ending (“b” in berry). […] That’s exactly how blueberry is spelled, with the b’s in positions 1, 5, and 7. […] So the “bb” in the middle is really what gives blueberry its double-b moment. […] That middle double-b is easy to miss if you just glance at the word.

(via)

Graphic design is my PASSION

Wait just how bad is 4? 30% accurate? Did they train it wrong as a joke? Also hatless 5 worse than 3?

Yeah, O3 (the model that was RL’d to a crisp and hallucinated like crazy) was very strong on math coding benchmarks. GPT5 (I guess without tools/extra compute?) is worse. Nevertheless…

The one big cope I’m seeing is in the METR graph ofc. Tiny bump with massive error bars above Grok 4 so they can claim the exponential is continuing while the models stagnate in all material ways.

50% success rate? sorry, all this for a coin flip?

Looks like they already removed it.